WEEKLY HIGHLIGHTS 2025 SECOND HALF

Radio Atlantic podcast “He’s Undocumented. She’s Not” and Turning 60

I recently listened to a podcast by the Atlantic, which literally brought me close to tears. The storyline sounded like a movie script, yet it was real.

It was the story of a couple living in Chicago. The wife is a US citizen, while the husband is undocumented.

The husband and his mom immigrated illegally from Poland into the US when he was about 6 years old. He therefore grew up like a normal kid in the US, but with a Polish passport and illegal residency status, through no fault of his own. Because he had entered the US illegally as a child, he never had a chance to obtain US residency or citizenship.

This naturally had major consequences for his life. Not only could he not leave the country and return, he also constantly had to worry about being found out whenever he was stopped by the police for something as simple as speeding.

What then followed was the moving story of how the couple gradually recognised that their only possible path to a normal life was emigrating to Poland.

What made the decision to move to Poland so difficult were its consequences. The husband knew that by taking this step, he would burn all bridges and could never come back to live in what had become his home country. The wife would be starting a new life in a country she knew nothing about, where she had no friends and did not speak the language.

Starting anew in life is scary. But at the same time, building a new life seems exciting.

Overcoming difficulties often requires boldness, where we leave what we have behind in exchange for the prospect of finding a better life. This is usually hard, because we know what we lose, but cannot imagine what we might gain and what our life under the new circumstances will be like. As a result, we tend to greatly overestimate the value of what we have.

Naturally, there are no guarantees that changing a specific aspect of our lives will help us overcome our difficulties. Yet, in most cases, we end up much better off, at least subjectively, because our brain is able to adapt to new circumstances much better than we think. We can often tolerate things that we imagine to be intolerable. Moreover, what I have discovered time and time again is that after abandoning something that seemed important, it becomes much less important.

This brings me to a major turning point that I will face in a few years and that I was reminded of this week as I turned 60. In a few years, I will reach retirement age.

The first thing that comes to mind is that the thought of soon reaching my official retirement age seems surreal, because I do not feel significantly different from how I felt twenty or thirty years ago. I now need glasses to read and I run a bit slower than when I was young. But I can still do experiments (often better than my students), and I feel that I am better at most other things than I used to be.

At the same time, I strongly agree that from a societal and university point of view, retirement is a good thing. There are so many new, talented and often more capable young scientists who have taken on, or want to take on, roles in which they can contribute to research and make a difference to our society. Senior professors like me should not stand in their way.

What is more, I believe that retirement is also a good thing from a personal point of view.

I used to dread this time in my life because I was afraid of no longer feeling needed. But now I look forward to it, not despite but because of the uncertainty of what it will bring. I look forward to changing my focus in life and spending my days entirely differently. It feels like a new start with many opportunities.

Just like for the American-Polish couple, being forced to make a major change in life can be a gift.

HIGHLIGHTS FOR WEEK OF 22 – 28 DECEMBER

Christmas in Germany

I spent a week with my parents in Germany, which was wonderful, except for the outdoor temperatures …

HIGHLIGHTS FOR WEEK OF 15 – 21 DECEMBER

On personal improvement

This month I have spent much time on personal improvement. After radically minimising my wardrobe and belongings almost two years ago, I realised that there were still many things I had not worn or used since then, prompting me to conduct another major clean-out.

One thing that this clean-out made me think about is how much waste many people (including myself!) generate by buying unnecessary stuff. The tendency of people to keep buying stuff explains why the huge rubbish containers outside my HDB block seem to be constantly overflowing.

The thought that all this waste needs to be disposed of is horrifying and makes me feel guilty. I hope that these feelings of guilt will make me more conscious before buying new stuff in the future!

On a personal level, though, getting rid of lots of stuff always feels great. Paradoxically, I usually do not anticipate this. On the contrary, I expect that by getting rid of things, I will experience a great loss. This is of course precisely what makes it so difficult to dispose of our belongings in the first place.

There are likely a number of reasons for the discrepancy between our anticipated and actual feelings after parting with things we own, including that our possessions tend to be less important to us than we think and that it is hard to imagine what life might be like without them. In addition, I believe that our brain quickly adapts to the new reality of no longer having certain things.

Minimising possessions feels good because I find it wonderful to look at non-cluttered spaces. Moreover, it makes me waste less time thinking or worrying about my possessions and maintaining them, which in turn keeps me focussed on the things that are important to me.

Just as important as minimising the things we own (and keeping them to a minimum) is eliminating mental distractions related to the things we keep and avoiding preoccupation with accumulating new possessions. In fact, I feel that it is less about the number of things we have than about how much time we spend thinking about them and about acquiring more. As such, for me minimalism is less about having a minimal number of things than about thinking less about material things.

Not thinking about material possessions tends to be more difficult than getting rid of things, at least for me. One reason is that I seem to be a rather obsessive person who, once obsessed by a thought, finds it hard to let go.

As I have discussed before, one major obsession throughout my life has been collecting records. Notably, at present, shortly before the holidays, I have no records lined up in my browser tabs that I plan to buy. This may present a unique opportunity to make a change, by trying not to add new records to my collection.

Based on my experience, actually accomplishing this would involve a number of changes in my mindset and behaviour, all of which seem rather difficult!

One approach would be to convince myself that it is not worthwhile to keep searching for new music and buy more records.

A second approach is to minimise exposing myself to triggers that prompt the search for new records.

Finally, it would be helpful if I could overcome the natural human urge to constantly seek novelty.

Regarding the first point, I previously discussed the approach of telling myself that I am unlikely to discover records that give me more satisfaction than those I already own. The good part is that this is actually true. Nonetheless, the excitement of potentially discovering something new, and owning it, is difficult to extinguish. I will come back to this below when discussing the third point.

For everything we do but did not plan to do, there tends to be a trigger. Trying to minimise my exposure to potential triggers of unwanted behaviour can be quite effective. In the past, I have changed certain routines, for instance by committing to no longer visit certain websites or completely forgoing a habit, often with remarkable results.

Avoiding certain websites eventually leads to our brain forgetting the habit. However, what I have noticed is that when I kill one habit, another one tends to crop up. The reason for this is that our (or at least my) brain, at times of idleness, seems to constantly search for novelty, which brings me to the last point.

In the end, the ultimate goal is to kill the desire to seek novelty. We likely seek novelty because of the possibility of discovering something exciting, despite knowing that this won’t actually make us any happier. In fact, it may do the opposite.

On the other hand, not exposing myself to novelty tends to make me feel good. For instance, I have recently rediscovered that it feels great to sometimes go to work without listening to music or podcasts, because it allows me to notice other things and to be more mindful and conscious of the things I plan to do and enjoy during the day. After all, being alive is about being present in the moment and not being distracted.

It seems that we have lost the ability to do nothing, which is the subject of Jenny Odell’s book “How to Do Nothing”, which I read this year and which made me want to become better at doing nothing. I have great hopes for my one-month holiday after the end of the next semester (in a place that I have not yet decided on), which I plan to spend doing nothing (or at least very little).

Ultimately, I would like to reach a different mental state where the conditions to feel satisfied change, where just being and doing gives me satisfaction. This is indeed one of my goals for the coming year.

HIGHLIGHTS FOR WEEK OF 8 – 14 DECEMBER

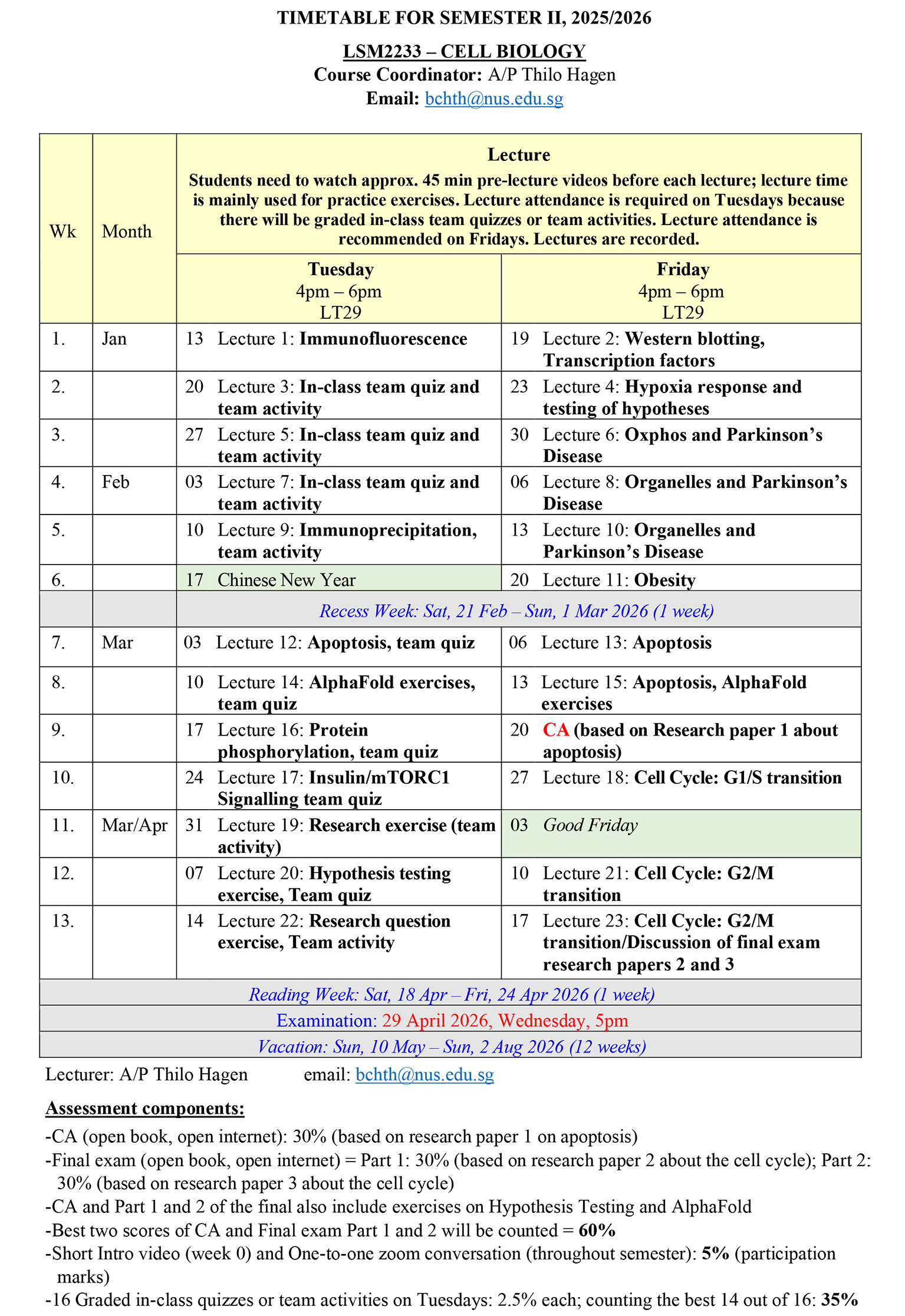

Finalising plans for LSM2233 Cell Biology

Last week, I talked about my new plans for my teaching in the coming semester.

While the things I have thought of seem exciting, my main worry is whether I will be able to actually design and carry out these activities in a well-coordinated and effective manner. It can appear a bit overwhelming, even though I have felt similarly before other semesters and in the end managed to put my plans into practice.

This week, I took a big step towards feeling a bit more at ease by planning the semester in detail, including the various activities I would like to conduct.

I also hope to have overcome last year’s problem of disappearing images in the Learning Catalytics team-based learning platform by identifying a (hopefully!) reliable image hosting website.

This means that I can go into my Christmas holiday without worrying too much.

HIGHLIGHTS FOR WEEK OF 1 – 7 DECEMBER

LSM2233 Cell Biology

Two weeks ago, I discussed my disappointment about my performance in our School’s postgraduate student workshop. What made things worse is that one student from the organising committee told me that she took my Cell Biology course during the Covid pandemic, but seemed not to remember any details.

This shocked me because I personally felt that the course was amazing and must have stood out to students, too, especially during the pandemic. What I thought differentiated my course from most others during the COVID-19 period was, firstly, how truly interactive my lectures were. Secondly, there were the team-based learning quizzes, delivered via the Learning Catalytics platform, which I perceived as extremely engaging and fun for students.

Indeed, while writing a recommendation letter for my former student Diya, who took the course during the COVID-19 period, I discovered an old email that she sent me during the course, in which she wrote:

“I would like you to know that it is honestly such a pleasure being part of your class for this module. Even though sometimes the content can be quite challenging, I really, really appreciate the way you deliver it, and how you place so much more emphasis on learning rather than scoring. I find your lectures very exciting, and I want you to know that you have helped me rediscover my joy for learning!”

Reading Diya’s email reminded me of how much joy and satisfaction teaching can bring and made me feel excited about the coming semester.

Moreover, when I tried looking up the student who could not remember my course from the Covid period, I could not actually find her name in the student list. Thus, perhaps I should not be too worried about this.

The thing that makes teaching most exciting, at least for me, is trying out new learning activities. In fact, I have spent the past few months thinking hard about what new activities I could introduce in the coming semester. However, it was only during the last few weeks that some ideas finally materialised.

One goal I had was to introduce activities using AI tools other than chatbot-based exercises. Introducing such activities requires that I first learn how to use the specific tools myself. Thus, I have invested time in learning how to visualise protein structures predicted by AlphaFold using ChimeraX, an amazing and freely accessible programme.

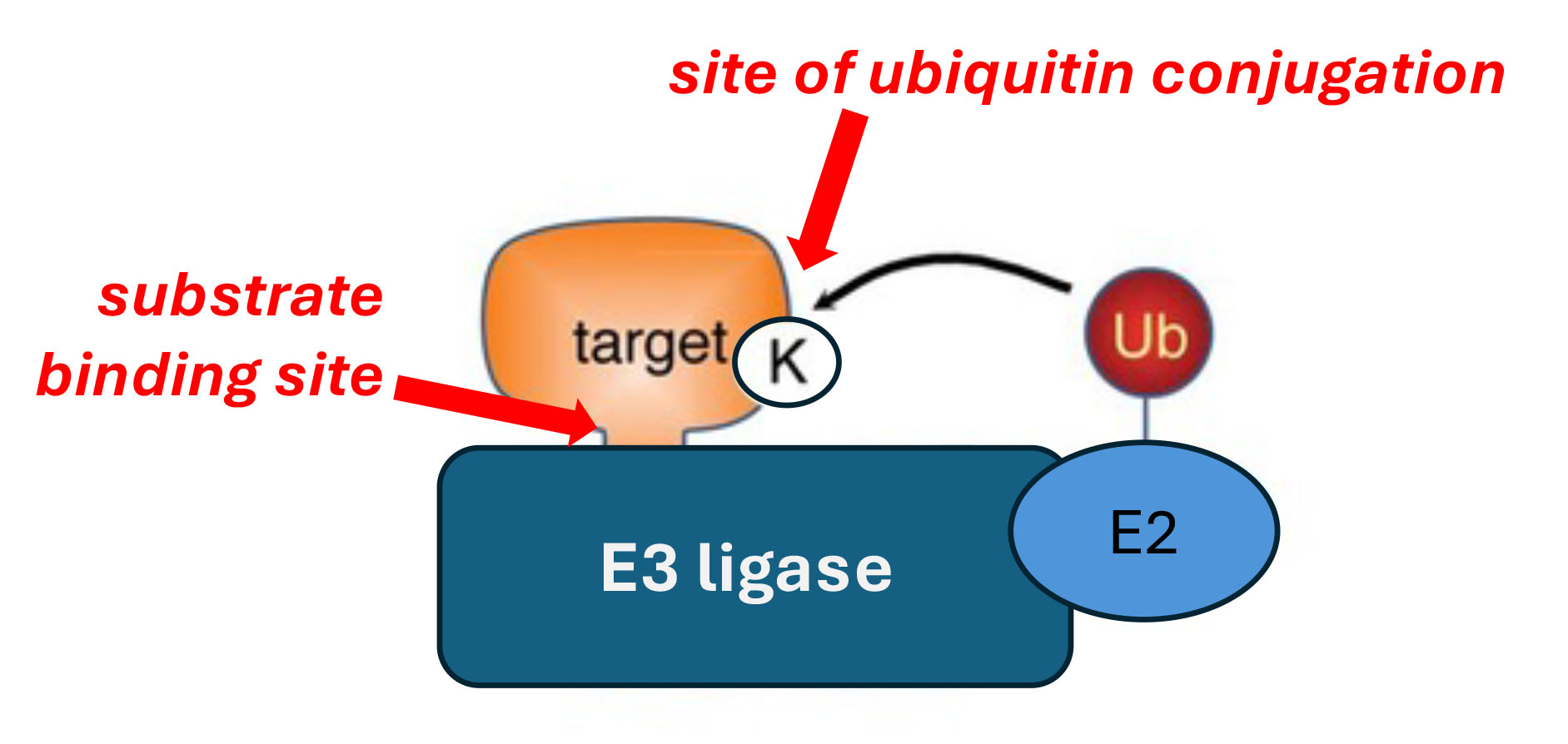

The next challenge was to come up with interesting and meaningful exercises. My original plan was to let students pursue protein structure-based research questions on the effect of protein ubiquitination on the binding of substrate proteins to E3 ubiquitin ligases.

One question that I have been interested in for a long time is the binding between E3 ubiquitin ligases and their substrates, specifically whether E3 ligases can distinguish between protein substrates that are non-ubiquitinated and those that are already conjugated with ubiquitin. The reason is that the site within a substrate protein to which the E3 ligase binds is different from the site to which the ubiquitin polypeptides are attached. Therefore, it should in theory not matter whether a substrate is ubiquitinated or not. However, there may be factors other than the E3 ligase binding site that determine the interaction, such as steric hindrance effects.

However, this idea turned out to be infeasible because AlphaFold does not allow the modelling of ubiquitinated proteins.

Nonetheless, I have managed to come up with (and am still developing) other exercises in which students can use AlphaFold and ChimeraX to predict or explain the effects of mutations, protein modifications, or interactions with binding partners on protein structure and function.

I have also planned an exercise that involves identifying and validating interacting proteins using online search tools and databases and characterising structural aspects of the interaction using AlphaFold.

I feel excited about these exercises because they resemble real research, where students test hypotheses and make their own decisions, and where in some instances there is no predictable outcome and students may even find something new.

Another of my plans after the last semester was to introduce more creative thinking-related activities.

Firstly, I am planning to conduct activities in which students need to identify what is unexpected in given datasets. This is something I have done in the past and want to continue and expand in the coming semester. Over the past months, I have read many relevant papers to find good examples.

Another idea is to let students come up with a research question. I have tried similar activities in previous semesters. For instance, in one semester I discussed an example of a good research question and then asked student groups to come up with their own research question about any phenomenon they have encountered. Subsequently, the groups had to try to answer their research question by doing literature research and produce an engaging video to discuss their research question. Although this was an interesting assignment, it had the drawback that it did not blend in well with the remaining course contents.

In another exercise that I conducted last year, I asked students to propose a hypothesis for a research question based on a scientific talk we watched during class. This activity was less successful. Coming up with good scientific hypotheses proved difficult for students, due to a lack of scientific knowledge and of awareness of what a good hypothesis looks like.

Generating a good research question requires less scientific knowledge than proposing a specific hypothesis. Nonetheless, some theoretical foundations are helpful.

In particular, one of my goals for the coming semester is for students to appreciate different types of research questions and recognise what makes a good research question. As such, it will be useful to discuss examples of good research questions, as well as research questions that are less good.

According to my own categorisation, research questions (or research objectives) can fall into different groups.

At the most basic level, they address a gap in our current knowledge, for instance by trying to identify the mechanism underlying a phenomenon, characterise a missing link in a given pathway, address the physiological significance of a novel mechanism, or develop drugs against a newly identified drug target.

At a more complex level, a research question draws connections to other areas or considers the bigger picture.

At the most advanced level, a research question is based on uncovering hidden assumptions and recognising previously unnoticed patterns, often by removing commonly assumed constraints. These advanced research questions can take the form of questions aimed at identifying general principles, or of “What-if” questions of potentially broad significance that can lead to scientific breakthroughs.

A leading proponent of “What-if” questions is Noubar Afeyan, co-founder and chairman of Moderna and founder and CEO of Flagship Pioneering. In 2010, he and his colleagues at Flagship Pioneering asked the question “Could mRNA be a drug?”. To pursue this question, Moderna was founded, ultimately leading to the development of game-changing mRNA vaccines during the COVID-19 pandemic.

Amazing scientist, inventor, CEO, and entrepreneur Noubar Afeyan

The research towards such goals often involves the development of new technologies, which enables researchers to ask new questions that could not be answered previously.

As highlighted above, it will be crucial to explain to students the different types of research questions and illustrate them using examples. This is probably best achieved in the form of a pre-lecture video, which could then be followed by an in-class activity in which student groups generate their own research questions.

To emphasise essential elements of brainstorming, I plan to initially let the student groups come up with and submit a minimum number of research question ideas. Subsequently, student groups could be tasked to rank the questions according to how interesting they are, followed by asking ChatGPT to also rank them and provide feedback on all questions. Finally, students choose and refine the question that their group feels most excited about and explain their final choice.

Finally, a reflection from one of my students during the last semester made me think. The student shared with me his thoughts about becoming an interesting and thoughtful person who is able to articulate his ideas and opinions well.

Like the student, I am often impressed by how well others are able to express themselves. I suspect that most of the amazing presenters I encounter have actively worked on their communication skills through practice and learning from failure.

But as my student also pointed out, in order to be a good communicator, a crucial first step is having one’s own opinions and insights.

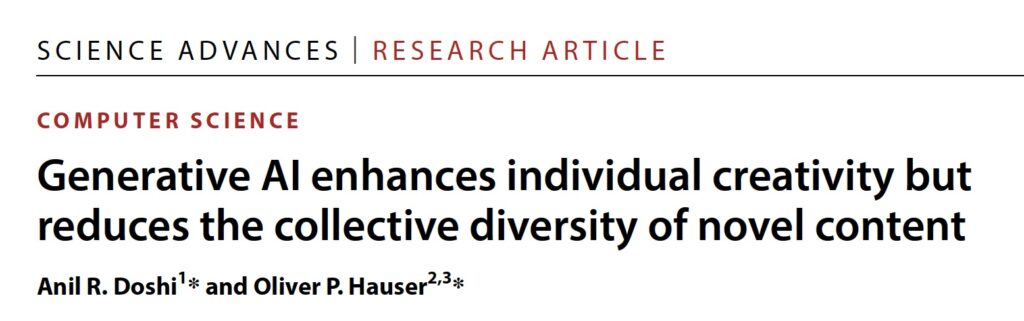

Indeed, when I read or listen to content created by others, what impresses me most are interesting and unique ideas, opinions and insights. The only way to arrive at individual ideas, opinions and insights is by seeking new experiences and reflecting on them.

In the age of AI, standing out as an individual, by having one’s own opinions and original ideas and by being able to communicate these effectively, is more important than ever.

As such, I want to continue the practice of reflection assignments, for instance by focussing on the importance of being not only a consumer, but also a creator of content, in the broadest sense of the word. Creating content, i.e. actions, products or ideas that are of value to others, be it our family and friends or society at large, can be considered one important meaning of life. The earlier we start doing so, the better we will become at it.

However, having ideas and being able to communicate them is unlikely to be enough. Success will ultimately depend on another factor, our personal attributes, e.g. the extent to which we are able to put our ideas into practice and overcome the difficulties we are struggling with.

As such, topics that I feel are important for students to reflect on are:

- to what extent they are not only learning and consuming, but creating content, and
- how they have managed or plan to overcome difficulties they are facing.

HIGHLIGHTS FOR WEEK OF 24 – 30 NOVEMBER

Podcasts

Recently, I have listened to a number of amazing podcasts that shared a common theme. First, there was the Rest Is Politics Leading interview with playwright James Graham and Jacob Dunne, the real-life main character of Graham’s play “The Punch”. As a young man, Jacob punched someone, and the victim fell and died as a result.

The play tracks how Jacob took responsibility for his action by seeking forgiveness from the victim’s parents. This process of restorative justice, in which the offender tries to repair the harm caused by his or her criminal behaviour, is known to help both offenders and victims, or their relatives, move on and progress in their lives. Yet, in most current prison systems, options for offenders and victims to engage in restorative justice are severely limited.

Then there was the BBC Documentary “Raising Children on a warming planet”, in which I learned about how an airline pilot and his climate activist son, despite seemingly being on opposite ends of the climate change debate, managed to find common ground and develop empathy for each other.

And finally, there was Natalie Kitroeff’s emotional New York Times “The Daily” podcast, entitled “Parenting a trans kid in Trump’s America”.

This podcast told the moving story of two parents living in the southern US state of Tennessee and one of their children, who seemed different from their other three kids. From early childhood, the child appeared sad and withdrawn and exhibited many unusual behaviours, such as not acting the way a boy was expected to and instead being excited about things that girls typically like.

It was not until their son had turned 14 that the mom finally started reading on the internet about gender dysphoria, a condition in which children experience psychological distress, and may display behaviours that do not fit their biological sex, because of a mismatch between their biological sex and their gender identity. She decided to talk to her child about it. Hearing about this conversation, about the determination of the mom and her husband to support their child, and about the child suddenly becoming a happy girl was very moving.

However, all this happened against the background of US President Donald Trump’s second term in office, his administration’s denial of the existence of transgender identity and, worse, its efforts to prevent any minors from undergoing hormonal gender-affirming medical care. As a consequence, all doctors in the state of Tennessee who previously provided this type of treatment were no longer able to do so. In addition to the parents failing to find a doctor who could give their child the therapy they felt she needed, the new radical policies changed the attitudes of the public and of their friends, and raised worries that schools would not protect transgender children. All this was heartbreaking.

Eventually, the whole family moved north to a blue, Democratic state, and to a school that could guarantee their child’s safety. But even there, the consequences of the Trump administration’s policies caught up with them. At one point, the father even contemplated moving to a different country.

One thing that these stories made me realise is how quickly I myself tend to judge other people’s behaviour. Rory Stewart, co-host of the Rest Is Politics, pointed out in one of his podcasts how easy and common it is to take the moral high ground and condemn people for their behaviour, when most of us probably also sometimes do things that we do not want other people to know about. I certainly do.

Most people also rarely ask themselves how they would have reacted in the situation others find themselves in, or even what it may be like to experience what others do. If we were in other people’s shoes, would we have the courage to take action or act differently? How often do we ourselves speak up when we encounter things that are not right? How often do we express empathy and offer help when other people need it?

There is another aspect of empathy and helping others that I have recently been thinking about: recognition.

I sometimes feel a tendency to expect recognition from others, especially if I invest much in trying to help others.

Where does this come from? It may be different for everyone. For me, it is certainly not a need to be admired. Instead, I feel a sense of unfairness when I am not recognised despite doing things better than others. Because I feel a strong sense of fairness, I keenly acknowledge other people’s success, and feel uncomfortable about praise I receive that I feel is not justified.

I have always been most inspired by people who do not seek any credit for what they do. Since I started this post with podcasts, I should mention that I have gotten into the habit of listening to the great Sherlock Holmes podcasts from Noiser. What has impressed me is that the great detective usually wants to remain unknown as the person who solved the crime, and that solving a case is all the satisfaction he needs.

Of course, there are many inspiring real people who, like Sherlock Holmes, do not seek credit for what they do. Yet, despite not seeking recognition, many of them are well recognised. But they achieved their reputation because, from the start, they were undeterred by a lack of recognition, since recognition was never something they cared about.

HIGHLIGHTS FOR WEEK OF 17 – 23 NOVEMBER

Learning from failure

This week, I gave an invited talk in our school for graduate students on how to prepare the research proposal and oral presentation for their PhD Qualifying Exam. I felt genuinely honoured to be invited once again to address this topic. I was also excited about the opportunity, especially because I had a lot of tips and advice I wanted to share.

However, as the day approached, I realised that I probably had too many things to share within the time allocated for my talk. So I did what I always advise my students not to do: cram in everything and present as fast as possible. It is indeed interesting how often I do not follow the advice I give to others.

The reason why I tried to cover everything was the same reason why many students include too much content in their presentations: I did not know what to omit because I felt that everything was important.

The main reason why I am able to help students with their presentations is that I have an informed outsider perspective. Hence, I am able to consider which points are important for the audience and which are not. Therefore, the answer to my dilemma would have been simple: I should have asked someone what I could omit.

Instead, I ended up racing through my points, which likely made it difficult for students to follow, especially foreign students.

Being under time pressure also has negative effects on me as a presenter. It makes me stressed and focused on finishing my presentation on time, instead of concentrating on the audience and ensuring that the students follow my train of thought and enjoy the presentation. Indeed, my best lectures are those where I do have time to cover my points and can afford to interact with students extensively.

So at least, the experience helped me to (re)learn an important lesson. After all, success does not come from avoiding failure, but from overcoming it.

HIGHLIGHTS FOR WEEK OF 10 – 16 NOVEMBER

The Key Podcast: An English Professor Embracing AI

One of the areas that has been most affected by the emergence of generative AI is non-fiction writing. People are using chatbots to come up with ideas for articles, to improve their writing, or to do all the writing for them. Naturally, this is especially true in universities. It is hard to imagine that there are many students who do not use chatbots to help them with their writing assignments.

As such, I listened with great interest to a podcast discussing Grammarly’s new Authorship AI tool. The podcast featured Jenny Maxwell, representing the company behind Grammarly Authorship, as well as a university lecturer, Jenny Billings, who has been using the application in her teaching.

Two of the lecturer’s opening remarks resonated with me greatly. Firstly, the lecturer expressed that she feels a moral responsibility towards students to incorporate AI into her teaching and help them prepare for a future in which AI is abundant. Secondly, she pointed out that students expect to be able to use AI tools in their high-stakes experiences, such as exams.

With regard to the second comment, I agree that students’ expectations to use AI tools in their assignments and assessments are perfectly reasonable, given that in the real world they are unlikely to carry out similar tasks without using AI. This is indeed why Grammarly developed its Authorship AI tool.

What is Grammarly Authorship?

Grammarly Authorship can be used in Microsoft Word or Google Docs by installing the Grammarly application. When students write with Grammarly Authorship, text that is written by the students themselves, created with AI (i.e. copied and pasted into the document from a chatbot), copied from another website, or edited with Grammarly is automatically labelled. When students submit their essay at the end of the writing process, they can share their report with the lecturer. The lecturer is thus able to see what students wrote by themselves, as well as the sources from which they copied content.

Grammarly Authorship also keeps track of the time students take to write an essay. Finally, it records the writing process and the evolution of the essay. If the students share the writing replay with their lecturer, the instructor can literally watch how the students composed their essay.

The two podcast guests highlighted various positive outcomes of using Grammarly Authorship:

The tool can help students become aware of how they engage with AI tools.

Students may feel more assured of being credited for their own contributions.

Because students get to see their report before submitting it, they can decide to increase their own input if they feel they have copied too much from other sources, before handing in their assignment.

Grammarly is also planning to incorporate new elements in the near future, such as a reader reaction option, which gives authors an idea of what potential readers think of the essay. Another planned tool is a virtual expert panel providing feedback on written pieces.

While all this sounds exciting, there are also critical voices among many instructors.

The first concern is a very practical one. Students could simply use another device to copy AI content by reading off the other device and typing the content manually. This may lead to anomalies in their “writing report” (faster than expected text generation, lack of editing). But nonetheless, this type of cheating is difficult to prove.

What this shows once again is that whatever measures instructors take to prevent cheating, students usually find ways around them.

The approach used by Grammarly Authorship is called process tracking. An insightful analysis by the Modern Language Association Task Force on AI in Research and Teaching raised a number of additional concerns about using process tracking to deal with AI.

Howard Community College Professor Kofi Adisa highlights that by tracking the process, we disrupt students’ creative writing process. He points out that for him, writing is an individual process: draft something, correct it, or sometimes delete it and start all over. He writes that “if I knew as a student, I had to share my process or worse to see that it was being tracked and that information was somehow in the purview of my professor, I probably would be too self-conscious and worried that my process was judging my writing.”

I especially like the criterion he uses for choosing his assessment conditions.

“As a professor, I never ask students to do something for the class that I couldn’t do if I were a student.”

I personally would certainly not want to share the process of drafting my posts. Not only do my initial drafts look very immature, but I often draft ideas that I do not want others to see, and I often start out with much more extreme or entirely different opinions compared to what I share in the final product. After all, writing itself is an idea generation process, which is often quite personal.

Another author, Prof. Leonardo Flores from Appalachian State University, makes some additional excellent points. He notes that tools like Grammarly Authorship are essentially built with mistrust as a baseline. As such, students are likely to view them as surveillance tools. This highlights another reason for letting students use AI tools freely, beyond testing them under real-world conditions: to build trust.

Leonardo Flores has a second concern. He worries that process tracking “can and will be used by educators to avoid updating their pedagogical practices to account for the impact of generative AI. Like AI detection software, it is simply another weapon in an AI arms race. … Both AI detection and process tracking can be easily circumvented, but the harm they do to writing practices and pedagogical situations are lasting.”

My personal answer, therefore, is not to track whether and how students have used AI, but instead to require that they use generative AI tools to solve tasks, or that they reflect on how these tools have helped them.

HIGHLIGHTS FOR WEEK OF 3 – 9 NOVEMBER

Mitochondrial uncouplers

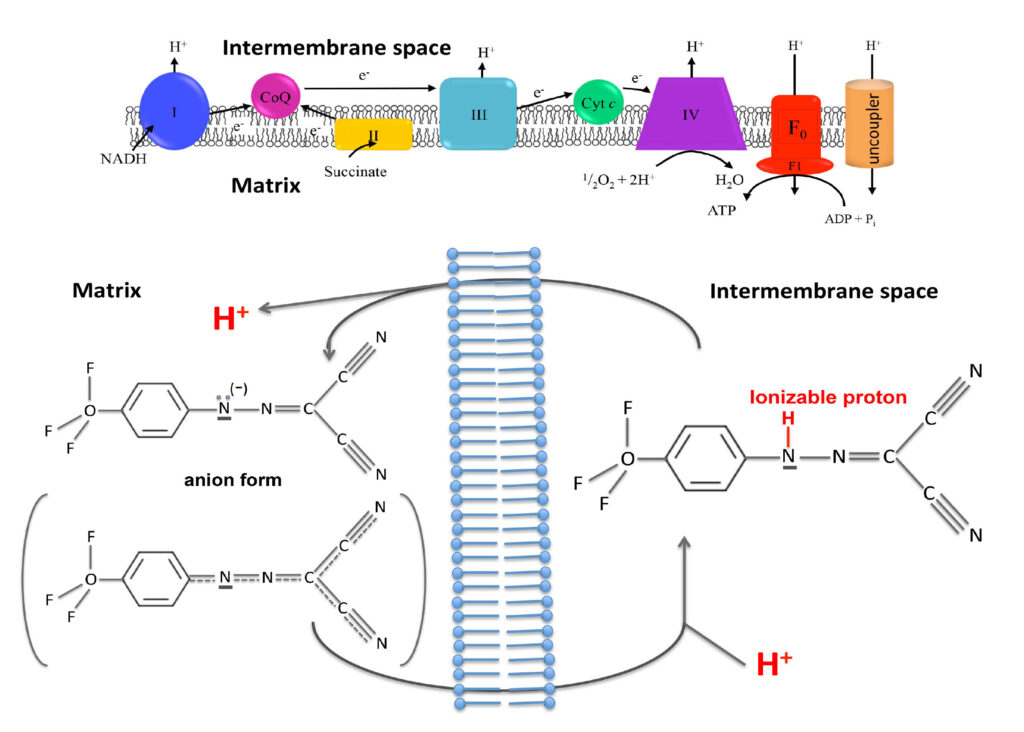

One topic we have researched in recent years, and recently published two papers on, is mitochondrial uncouplers. These compounds increase energy expenditure by causing our mitochondria to continuously burn fuels derived from sugar and fat. As a result, uncouplers are able to induce substantial weight loss.

On a biochemical level, uncouplers dissipate the proton gradient across the inner mitochondrial membrane. When our mitochondria burn fuel derived from carbohydrates and fats, they convert the released energy into a proton gradient across the inner membrane of our mitochondria, a cellular organelle often referred to as the powerhouse of the cell. Mitochondria then utilise this proton gradient to synthesise ATP, the universal energy currency in our body. Energy-rich ATP is synthesised from its energy-poor precursor ADP via addition of a phosphate group.

The generated ATP can then be used to drive all energy-dependent cellular functions, such as muscle contractions, protein synthesis and brain activity. In this process, ATP is converted back to ADP, and the cycle of ATP synthesis in the mitochondria and ATP utilisation in the rest of the cell continues.

One important concept required to understand how uncouplers work is the coupling of fuel oxidation to the availability of ADP. When cells do little work, energy-requiring processes produce little ADP. Under this condition of low ADP availability, the proton gradient across the inner mitochondrial membrane is not used to produce ATP and consequently becomes very high. A high proton gradient in turn slows down fuel oxidation because the mitochondria need to pump out protons against an increased gradient.

One could compare this to pushing a trolley up a slope. The steeper the slope (i.e., the height gradient), the more difficult it becomes to push the trolley up.

Thus, when little ADP is available and the proton gradient accumulates, fuel oxidation slows down because the energy derived from burning the fuel is not enough to pump out more protons.

Uncouplers function by providing an alternative route for protons to pass through the inner mitochondrial membrane without producing ATP. It is as if, in a hydroelectric power plant, we provided alternative channels for water to pass through the dam, bypassing the turbines. Water would then flow continuously without producing any electricity.

In mitochondria, uncouplers dissipate the proton gradient by shuttling protons across the inner mitochondrial membrane, from the intermembrane space back into the mitochondrial matrix. This creates a futile cycle in which fuels are oxidised to create a proton gradient, which is then immediately dissipated. As a consequence, fuels are continuously burnt, resulting in weight loss.

Uncoupler compounds achieve this feat by having two important properties. First, they can exist in both a proton-bound and a proton-less state. Second, uncouplers are membrane-permeable in both of these states.

One could argue that with the discovery of new blockbuster incretin-based anti-obesity drugs, such as the GLP-1 receptor agonist semaglutide (e.g. Ozempic) and the dual GLP-1 and GIP receptor agonist tirzepatide, the weight loss problem has been solved. These drugs indeed cause amazing weight loss within a short time span.

What is more, they come with few side effects. The most common side effects are gastrointestinal symptoms, which 10% of patients taking semaglutide experience. With tirzepatide, the percentage of patients with gastrointestinal side effects drops to approximately 5%.

However, after discontinuing these drugs, most patients regain two thirds of the weight they have lost while on the drug. Even if patients continue taking the drug, the weight loss effect tends to plateau after about a year. In other words, patients become tolerant to the drug.

While it is likely to be difficult for the body to develop tolerance to uncouplers, these compounds have their own set of side effects. They fall into two categories.

The first type is on-target effects. While uncouplers increase fuel oxidation, they do so at the cost of mitochondrial ATP production and therefore cause an energy deficit. If the uncoupler is not dosed carefully, this can cause dangerous complications in organs with high energy demands, such as the heart.

The proton gradient across the inner mitochondrial membrane stores potential energy, which is normally used to drive the synthesis of ATP. When mitochondria dissipate this proton gradient, the energy that is not used to synthesise ATP is instead released as heat. Hence, another common side effect of uncouplers is sweating, which can be excessive.

The second type of side effect is off-target effects on unrelated cellular processes, which are common to most drugs. Consequently, one of the objectives in drug discovery is to develop compounds with the highest possible selectivity towards the intended target.

Common off-target effects of uncoupler compounds include dissipation of the proton gradient in other cellular membranes, and an inhibitory effect on the electron transport chain. In addition, the uncoupler compound niclosamide, which we have been particularly interested in, causes DNA damage.

In order to limit the unwanted effects of uncoupler drugs, researchers have pursued different approaches. One example is an orally administered, controlled-release formulation of the uncoupler 2,4-dinitrophenol. This formulation consists of polymers that form a coating around the uncoupler drug. The polymer coating contains small pores, through which the uncoupler is slowly released.

As a result, the uncoupler is present in the human body at low but steady concentrations that still exert a therapeutic effect. This avoids the high peak drug concentrations that ensue if the uncoupler is administered as a pure drug. Indeed, the researchers found that the controlled-release uncoupler formulation has an LD50 (the dose at which a compound is lethal for 50% of tested animals) that is more than 10-fold higher than that of pure 2,4-dinitrophenol.

Another potential approach to limit side effects is to target uncouplers to specific tissues. One very successful example is the development of an uncoupler prodrug. This prodrug is inactive on its own, but is converted to an active uncoupler by drug-metabolising cytochrome P450 enzymes. These enzymes are expressed predominantly in the liver. Therefore, the active uncoupler accumulates mainly in liver tissue, thus avoiding on-target and off-target side effects in other tissues.

The uncoupler prodrug was shown by the researchers to be a remarkably potent and safe therapy for fatty liver disease, by promoting the oxidation of fatty acids in liver cells.

Notably, fatty liver disease is a major public health problem worldwide, including in Singapore. As I have recently learned from a newsletter published by NUS Medical School, the number of people with non-alcoholic fatty liver disease (NAFLD) in Singapore in 2019 was estimated at approximately 1,492,000, out of a total population of 5.7 million (of which approximately one third are mostly young foreign workers, who are less likely to have fatty liver disease). That would mean that approximately one in three Singaporeans has fatty liver disease. What is more, the number of people with fatty liver disease in Singapore is expected to rise to 1,799,000 by 2030.
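The arithmetic behind the "one in three" estimate can be checked quickly. This sketch assumes the foreign-worker share is exactly one third and, for the second figure, that none of the NAFLD cases occur among foreign workers; both are simplifications of the rough numbers in the text.

```python
# Back-of-the-envelope check of the NAFLD figures quoted above.
# Assumption: "approximately one third" foreign workers is modelled as exactly 1/3.

NAFLD_CASES_2019 = 1_492_000
NAFLD_CASES_2030 = 1_799_000  # projected figure
TOTAL_POPULATION = 5_700_000
FOREIGN_WORKER_FRACTION = 1 / 3  # rough figure from the text


def prevalence(cases: float, population: float) -> float:
    """Fraction of a population living with the condition."""
    return cases / population


# Cases spread over the whole population.
share_total = prevalence(NAFLD_CASES_2019, TOTAL_POPULATION)

# Assumption for the upper figure: all cases fall outside the foreign-worker group.
other_population = TOTAL_POPULATION * (1 - FOREIGN_WORKER_FRACTION)
share_excluding_workers = prevalence(NAFLD_CASES_2019, other_population)

# If foreign workers are less affected than average, the true share for
# everyone else lies between these two numbers.

# Relative growth in case numbers between 2019 and 2030.
projected_increase = NAFLD_CASES_2030 / NAFLD_CASES_2019 - 1

print(f"Share of total population:        {share_total:.1%}")
print(f"Share excluding foreign workers:  {share_excluding_workers:.1%}")
print(f"Projected increase, 2019 to 2030: {projected_increase:.1%}")
```

This gives roughly 26% for the whole population and roughly 39% if all cases fall outside the foreign-worker group, so "one in three" sits between the two. The projected 2030 figure corresponds to an increase of about 21%.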

In Singapore, rates of type II diabetes also continue to rise, with more than 400,000 people currently living with the disease. This number is projected to reach one million by 2050.

Finally, there is obesity, a major risk factor for diabetes. Obesity shows an upward trend in Singapore as well. When using the recommended Asian Body Mass Index cut-offs (which are lower than the thresholds for Western populations), in 2020, 38% of the Singapore population was overweight and 21% obese. As such, people with a body weight in the normal range are currently in the minority in Singapore.
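To illustrate why the choice of cut-offs matters, here is a small sketch that classifies a BMI value under two sets of thresholds. The Asian cut-offs of 23 and 27.5 kg/m² are an assumption on my part (they are the values commonly cited from a WHO expert consultation); the survey behind the 2020 figures may have used slightly different ones. The 38% and 21% are treated as non-overlapping groups.

```python
# Adult BMI categories under two sets of cut-offs (kg/m^2).
# Assumption: Asian cut-offs of 23 (overweight) and 27.5 (obese);
# international WHO cut-offs of 25 (overweight) and 30 (obese).

ASIAN_CUTOFFS = (23.0, 27.5)
INTERNATIONAL_CUTOFFS = (25.0, 30.0)
UNDERWEIGHT_BELOW = 18.5


def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight divided by height squared."""
    return weight_kg / height_m ** 2


def classify(bmi_value: float, cutoffs: tuple[float, float] = ASIAN_CUTOFFS) -> str:
    """Map a BMI value to a weight category for the given (overweight, obese) cut-offs."""
    overweight_from, obese_from = cutoffs
    if bmi_value < UNDERWEIGHT_BELOW:
        return "underweight"
    if bmi_value < overweight_from:
        return "normal"
    if bmi_value < obese_from:
        return "overweight"
    return "obese"


# Hypothetical person: 70 kg, 1.70 m, i.e. a BMI of about 24.2.
value = bmi(70, 1.70)
print(round(value, 1), classify(value), classify(value, INTERNATIONAL_CUTOFFS))

# Share of the population that is neither overweight nor obese (2020 figures),
# assuming the two percentages do not overlap. This still includes underweight people.
not_overweight_pct = 100 - 38 - 21
print(not_overweight_pct)
```

The same hypothetical person counts as overweight under the assumed Asian cut-offs but as normal weight under the international ones. Because the remaining 41% also includes underweight people, the share with a normal body weight is at most 41%.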

This then brings me back to our own research studies, which were aimed at finding ways to circumvent the side effects of uncouplers and understand the structural features responsible for the side effects.

In our first study, we tried to target uncouplers specifically to adipose tissue, thus potentially circumventing systemic side effects of these drugs.

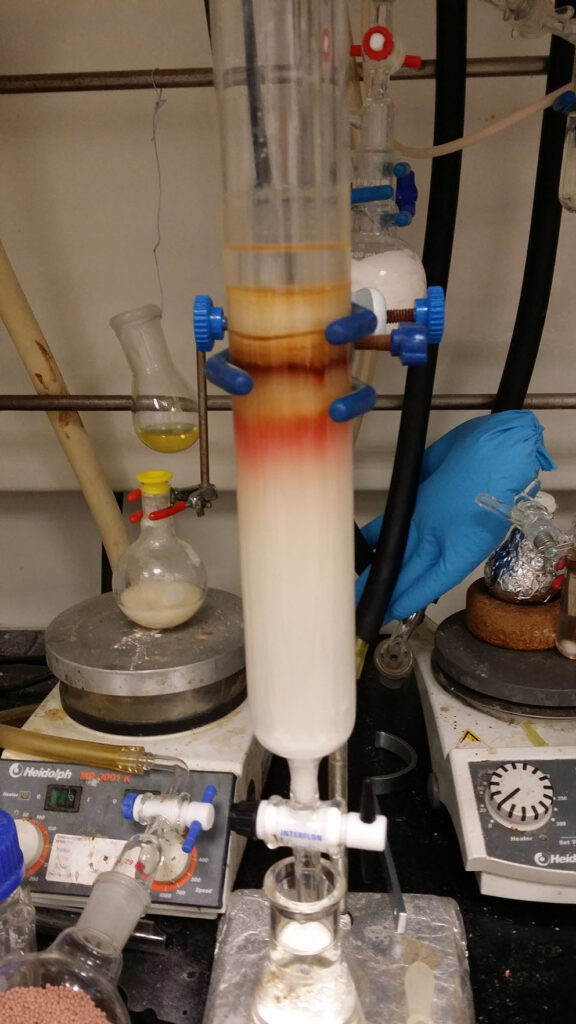

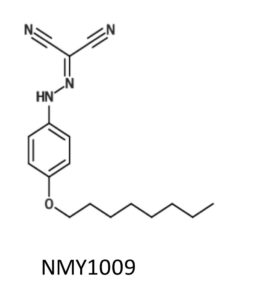

Many lipophilic compounds (i.e. compounds that mix well with lipids) have been found to selectively accumulate in adipose tissue. Hence, we explored the feasibility of conjugating uncoupler compounds with lipophilic groups. These compounds were synthesised by my former PhD student Mei Ying in the laboratory of Prof. Tan Choon Hong at NTU.

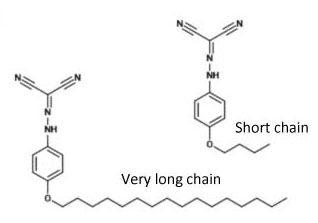

Mei Ying used hydrocarbon chains of different lengths, which were linked to the uncoupler compound FCCP via an ether bond. When we explored their uncoupling activity, we found that chain lengths of four or eight carbons gave the highest activity. The activity markedly decreased with a 12-carbon chain, likely because of the limited water solubility of the compound.

Hence, we decided to investigate the functional uncoupler conjugate with the longest hydrocarbon chain that still exhibited good activity, i.e. the uncoupler conjugated to an 8-carbon chain. We referred to this compound as NMY1009, in line with the convention of labelling novel compounds with the initials of the person who synthesised them.

After characterising the compound in cells and isolated mitochondria, Mei Ying went to the laboratory of Toni Vidal-Puig at the University of Cambridge to test the compound in mice. There, she worked with a very experienced and dedicated senior postdoc, Sergio Rodriguez, who guided her and led many experiments to advance the work.

To test the effect of NMY1009 on weight gain and metabolic parameters, mice were placed on a high-fat diet. Disappointingly, however, NMY1009 did not lower body weight or blood glucose in these mice.

Through another collaboration, with Gopal Venkatesan and Giorgia Pastorin from the Department of Pharmacy at NUS, we eventually found out why: NMY1009 was metabolically unstable, as the ether bond between the uncoupler and the hydrocarbon chain was rapidly cleaved in aqueous solution.

Yet another collaborator, Marcella Bassetto from Cardiff University, synthesised a lipophilic version of another uncoupler compound related to 2,4-dinitrophenol, which I discussed earlier in this post. The dinitrophenol analog carrying an 8-carbon hydrocarbon chain, which was also conjugated via an ether bond, had an even higher uncoupling activity than its parent compound. However, like NMY1009, the lipophilic dinitrophenol analog was also highly unstable in vivo when administered to mice.

Therefore, although conjugating a hydrophobic hydrocarbon chain to uncoupler compounds preserved or even improved uncoupling activity, linking these chains via ether bonds led to metabolic instability. This was a rather unexpected result, and it taught us that, in future, lipophilic groups will need to be conjugated via other chemical bonds.

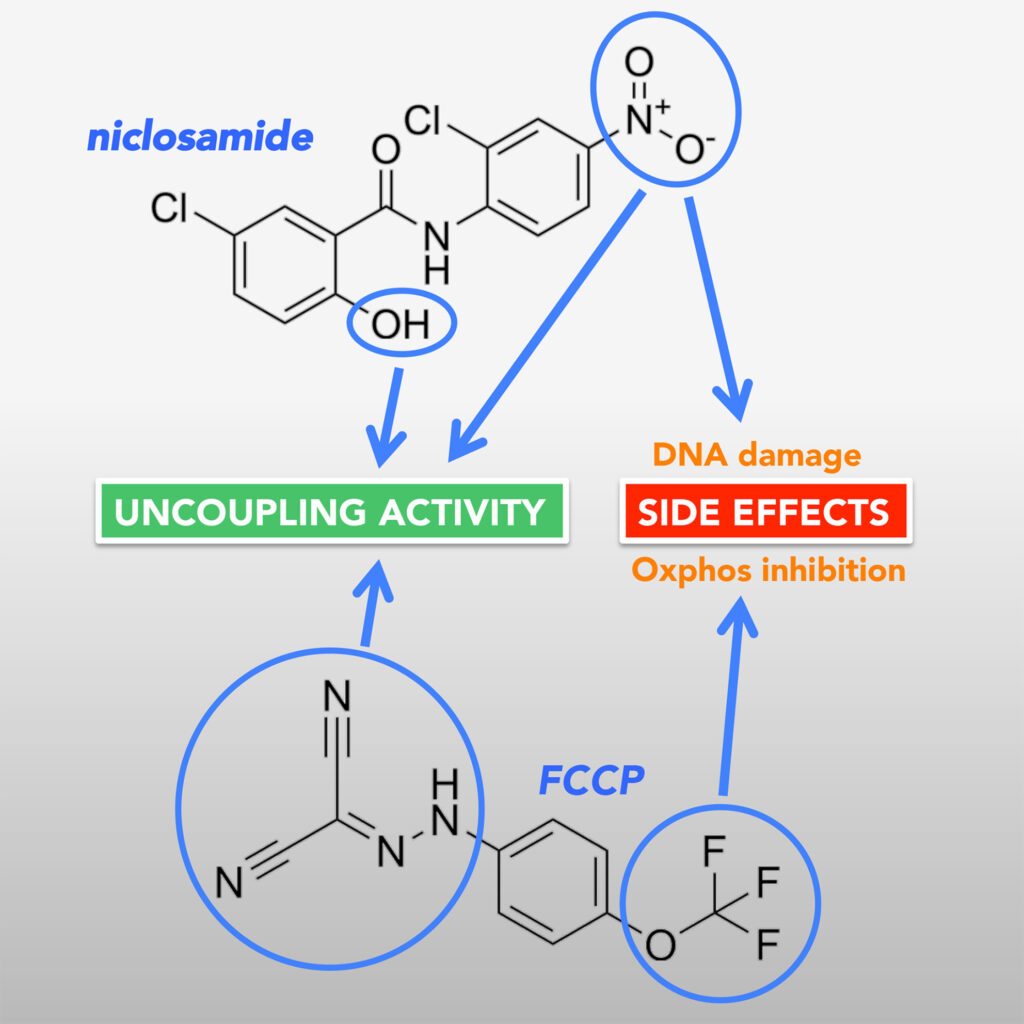

The goal of our second study was to find out which structural features in two of the most commonly used uncouplers, niclosamide and FCCP, are responsible for their side effects. As mentioned above, niclosamide causes DNA damage, while FCCP inhibits the electron transport chain.

To carry out our study, our collaborator Marcella from Cardiff synthesised a number of niclosamide analogues. We also used some FCCP analogues that were synthesised by Mei Ying.

With the help of these compounds, we obtained some interesting insights. By comparing the structural features and activities of the different analogs, we identified FCCP analogues that do not inhibit mitochondrial oxygen consumption but still provide good, although less potent, uncoupling activity. For niclosamide, we identified the two functional groups responsible for the uncoupling activity of the compound and characterised the role that these groups play. We also deduced that the nitro group, which is crucial for causing DNA damage, is also critical for high uncoupling activity.

These structural investigations provide important information that could aid further drug development. For instance, given the critical role of the niclosamide nitro group in both uncoupling and DNA damage, it may be possible to replace this group with other strongly electron-withdrawing functional groups that promote uncoupling without causing DNA damage.

Reassuringly, a recent publication by another group has confirmed our findings with niclosamide.

There is indeed much interest in niclosamide because the compound is an FDA-approved drug that is currently in clinical use to treat parasitic tapeworm infections. In recent years, niclosamide has been considered for use as a repurposed drug in the treatment of various diseases, including diabetes, fatty liver disease and cancer.

Notably, apart from uncoupling mitochondria and causing genotoxic stress, niclosamide has also been shown to be a potent inhibitor of the mammalian (sometimes also called ‘mechanistic’) target of rapamycin complex 1 (mTORC1 for short).

mTORC1 is a protein kinase complex that modifies various substrate proteins through phosphorylation. It is one of the most important kinases in our cells and one of the most, if not the most, intricately regulated.

One of the mTORC1 phosphorylation targets is a transcription factor called TFEB (short for Transcription Factor EB), which drives the production of new lysosomes. Lysosomes are cellular organelles that digest damaged macromolecules or parts of the cell and, in this process, generate nutrients during times of starvation. Phosphorylation by mTORC1 keeps TFEB in the cytoplasm, whereas inhibition of mTORC1 allows TFEB to enter the nucleus and activate its target genes.

Interestingly, we recently found that niclosamide induces a dramatic translocation of TFEB into the nucleus. Although this may be simply a consequence of mTORC1 inhibition by niclosamide, there is recent evidence that TFEB nuclear translocation is physiologically independent of mTORC1 activity, but instead dependent on regulatory complexes that are upstream of mTORC1.

As such, we are currently investigating the mechanism through which niclosamide regulates TFEB and why the effect is specific to niclosamide and not observed with other compounds that disrupt mitochondrial function.

I am happy that our paper on niclosamide has already been cited around ten times within the first year of its publication, and that, as mentioned above, a recent paper has confirmed many of our results (and acknowledged our study). I was therefore taken by surprise when I recently received an email from our faculty telling me that, because our paper was not published in a journal on the school-approved list, I might be penalised for it instead of being acknowledged.

In fact, I did not check whether the journal in which we published our paper, an open access journal called FEBS Open Bio, is on the approved list. However, given that our university has a publishing agreement with FEBS Open Bio, which covers the open access publishing fee for NUS researchers, it did not occur to me that our school would not allow us to publish in this journal.

A major reason why our school came up with an approved list of journals is a metric used to measure the research performance of universities, the so-called Field-Weighted Citation Impact (FWCI). The FWCI compares the number of times a publication is cited with the average number of citations received by similar publications. Here, “similar” publications are those with the same publication year, publication type (e.g. an original research article or a review paper), and subject area, all of which are known to affect citation rates. An FWCI of 1 thus means that a paper is cited exactly as often as expected.

Based on this definition, publishing papers that do not attract many citations will lower the FWCI of an institution. Thus, given that papers in lower-ranked scientific journals are less likely to be cited often (as the ranking is based on the average citation rate of papers in a journal), it makes sense to discourage authors from publishing in journals with low rankings. As such, the idea of coming up with an approved journal list seems like a well-intentioned measure.
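The definition above can be made concrete with a short sketch. It assumes that the expected number of citations for similar publications is already known, and that an institution's FWCI is the arithmetic mean of its papers' individual FWCI values; all citation counts are hypothetical.

```python
from statistics import mean

# Minimal sketch of the Field-Weighted Citation Impact (FWCI).
# Assumptions: the expected citation count for similar publications (same year,
# type and subject area) is given, and an institution's FWCI is the arithmetic
# mean of its papers' FWCI values. All numbers are hypothetical.


def paper_fwci(citations: int, expected_citations: float) -> float:
    """1.0 means cited exactly as often as similar publications on average."""
    return citations / expected_citations


def institution_fwci(papers: list[tuple[int, float]]) -> float:
    """Average FWCI over (citations, expected_citations) pairs."""
    return mean(paper_fwci(c, e) for c, e in papers)


# Three hypothetical papers from the same field and year (10 expected citations each).
baseline = [(30, 10.0), (12, 10.0), (5, 10.0)]

# Adding a rarely cited paper pulls the average down...
with_low_cited = baseline + [(2, 10.0)]
# ...whereas a well-cited paper raises it, whichever journal it appeared in.
with_well_cited = baseline + [(20, 10.0)]

for label, papers in [("baseline", baseline),
                      ("+ low-cited paper", with_low_cited),
                      ("+ well-cited paper", with_well_cited)]:
    print(f"{label:20s} FWCI = {institution_fwci(papers):.2f}")
```

Note that the journal does not appear anywhere in the calculation: a paper's FWCI depends only on its own citations relative to similar publications.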

However, there are in my opinion also problems with this approach.

The FWCI was intended to de-emphasize the obsession of academic institutions with journal rankings (which are based on so-called Journal Impact Factors). Evaluating a publication based on the impact factor of the journal in which it has been published can be very misleading, because the citation rate of an individual paper can be very different from the average number of citations of papers published in the same journal.

In contrast to the journal impact factor, the FWCI only considers the citation rate of individual papers.

However, defining a list of approved journals primarily based on impact factor-based journal rankings defeats the very reason why the FWCI was developed.

Secondly, the policy encourages researchers to publish more comprehensive and potentially more impactful papers. This is potentially a good thing, given the vast number of papers that are published and the large number of papers that go unnoticed. However, in my opinion, so many papers go unnoticed not because too many papers are published, but because most papers do not answer relevant scientific questions.

The whitelist policy also makes it more difficult for undergraduates to publish a first-author paper. Publishing a paper helps undergraduates secure scholarships and other opportunities. In fact, without having published a couple of papers as an undergraduate, I likely would not have been able to pursue a research career. Moreover, publishing a paper is also a strong motivating force and an immensely useful learning experience for undergraduates.

Luckily, it is possible to ask our Head of Department for approval to publish a paper in a non-whitelisted journal, which I will be sure to do next time.

HIGHLIGHTS FOR WEEK OF 27 OCTOBER – 2 NOVEMBER

Two weeks ago I posted one of two research updates that I wrote back in 2020 and never published. Here is the second one:

Sensing Sugar

In this post, I would like to discuss a research paper that made a surprising discovery about how we sense sugar. The article starts out with the astonishing fact that the average American consumes more than 45 kg of sugar per year. The paper then investigates how we sense sugar. Equally astonishingly, it is not (only) via the mouth.

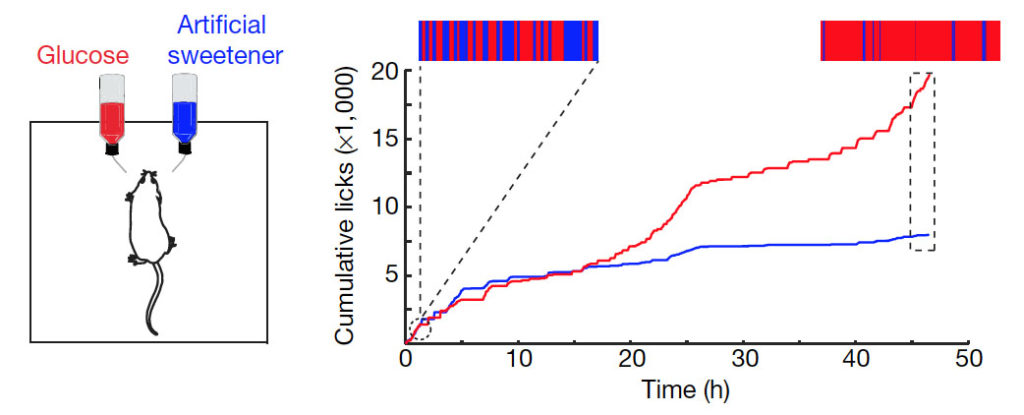

According to common knowledge, sweet compounds are detected by taste receptors on the tongue and palate epithelium. These taste receptors also sense artificial sweeteners, which were introduced more than four decades ago. However, when given to mice, artificial sweeteners are not as rewarding as real sugars in long-term choice tests.

This suggested that real sugar and artificial sweeteners might be sensed differently. Indeed, it had previously been observed that mice lacking the ability to taste sweet compounds still develop a preference for sugar, and this sweet taste receptor-independent craving for sweet food is only elicited by real sugar, not by artificial sweeteners.

The paper began with an interesting experiment, in which the researchers offered mice a choice between a sugar solution and an artificial sweetener (acesulfame K) solution. Initially, the mice showed no preference. However, after half a day the mice started to switch to the sugar solution, eventually drinking almost exclusively the water containing glucose. The same response also occurred in mice that lacked sweet taste receptors on their tongue and palate. These results hence suggested that sugar, but not artificial sweetener, can be sensed after ingestion, independently of taste receptors in the mouth.

Sugar activates a gut–brain sugar sensing axis. Left figure: Mice were allowed to choose between 600 mM glucose and 30 mM acesulfame K artificial sweetener. Preference was tracked by electronic lick counters, and the experimental results are presented in the right figure: The bars on top of the figure illustrate the lick frequency for glucose (red) versus artificial sweetener (blue) during the first and last 2,000 licks of the behavioural test. The results show that initially the mice have no preference, but by 48 h a clear preference for glucose over artificial sweeteners can be observed.

The researchers hypothesized that sugar may be detected by specific sensory neurons in the gut and stimulate particular brain regions that mediate sugar preference. Thus, the researchers tried to identify neurons that become activated when mice are given glucose. They did this by staining brain sections for increased expression of the transcription factor c-fos, a marker of neuronal activation. They found that a region called the caudal nucleus of the solitary tract (cNST) becomes activated upon ingestion of sugar, but not artificial sweetener or water. Importantly, the cNST brain region also became activated when the sugar (but not sweetener) solution was infused directly into the stomach, bypassing taste receptors in the mouth.

Detection of glucose in the gut leads to the activation of the caudal nucleus of the solitary tract (cNST) in the brain.

The researchers then hypothesized that the signal from the gut to the brain is transmitted via the vagus nerve. To prove this, they performed a rather complicated experiment. They monitored the activation of the cNST region in the brain using a technique called fibre photometry. In the cNST neurons, they expressed a genetically encoded fluorescent sensor protein that becomes more fluorescent when the calcium concentration in the neuron increases. An increase in the cellular calcium concentration indicates activation of the neuron. To detect the increased fluorescence, they implanted an optical fibre into the brain near the cNST region. When they then delivered glucose into the intestine (duodenum) via a catheter, they observed that the fluorescence in the excitatory cNST neurons increased. Importantly, this response was absent in mice with disrupted vagus nerves. This confirmed the presence of a gut-brain sugar sensing axis that is mediated by the vagus nerve.
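Fibre photometry signals are commonly expressed as the relative change in fluorescence, ΔF/F = (F − F0)/F0, where F0 is the baseline before the stimulus. The sketch below assumes F0 is the mean of the samples recorded before glucose infusion; the trace is made up for illustration, and the paper's exact analysis pipeline is not described here.

```python
from statistics import mean

# Minimal sketch of a common fibre photometry normalisation:
# dF/F = (F - F0) / F0, with F0 the baseline fluorescence before the stimulus.
# Assumptions: F0 is the mean of the pre-infusion samples; the trace is
# hypothetical and not data from the study.


def delta_f_over_f(trace: list[float], n_baseline: int) -> list[float]:
    """Normalise a fluorescence trace to its pre-stimulus baseline."""
    if n_baseline <= 0:
        raise ValueError("need at least one baseline sample")
    f0 = mean(trace[:n_baseline])
    return [(f - f0) / f0 for f in trace]


# Hypothetical trace: four baseline samples, then glucose infusion into the duodenum.
trace = [100.0, 101.0, 99.0, 100.0, 120.0, 130.0, 125.0]
dff = delta_f_over_f(trace, n_baseline=4)

# Largest relative increase after the infusion starts.
peak_response = max(dff[4:])
print([round(x, 2) for x in dff])
print(f"Peak dF/F after infusion: {peak_response:.0%}")
```

In this framing, the result in mice with disrupted vagus nerves corresponds to post-infusion ΔF/F values that stay close to zero.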

In another complex experiment, the researchers showed that the neurons in the cNST region of the brain are required for sugar preference. Showing that something is required usually involves removing or switching it off and seeing whether the effect is still there. Hence, the researchers silenced these neurons and determined whether the mice lose their preference for sugar. To specifically silence the sugar-activated cNST neurons, the authors expressed tetanus toxin in these cells, which blocks the release of neurotransmitters. The DNA encoding the tetanus toxin was delivered into the cNST region via an adeno-associated virus (AAV), which was injected into this specific brain region. However, expression of the tetanus toxin required recombination of its coding DNA sequence, which was mediated by Cre recombinase. The Cre recombinase coding sequence, in turn, was placed under the control of the c-fos gene promoter, which becomes active upon neuronal excitation. Hence, in the actual experiment, the virus was injected into the brain region, and the mice were then given sugar water. This led to the activation of the cNST neurons, activation of the c-fos promoter, expression of Cre recombinase, recombination of the tetanus toxin coding sequence and subsequent expression of tetanus toxin in these neurons. As a result, the sugar-activated neurons were silenced.

When the researchers subsequently gave the mice glucose again, they no longer observed a preference for glucose, showing that the cNST neurons are indeed necessary for sugar sensing in the gut. It is a rather complicated experiment to show that activation of cNST neurons is required for sugar preference, but nonetheless an important one, which may even have therapeutic implications in the future, because it points to potential targets for inhibiting sugar craving in humans.

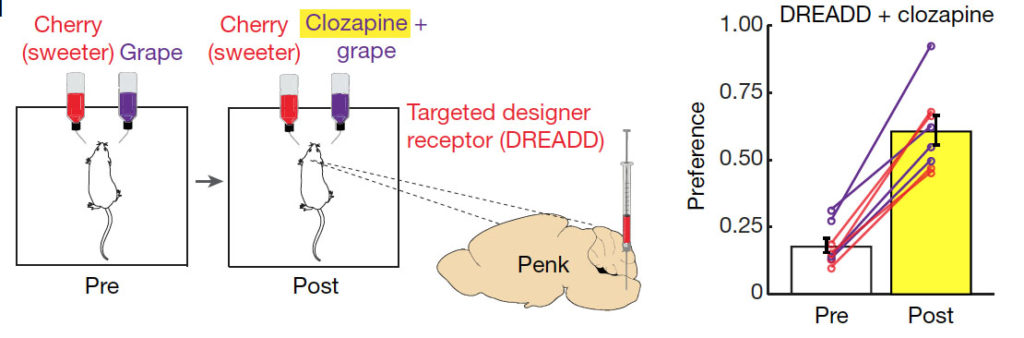

With all this knowledge, the researchers then tried to rewire the whole pathway and make mice crave a different stimulus, much in the spirit of physicist Richard Feynman, who said, “What I cannot create, I do not understand”. To do this, they first identified the exact neurons in the cNST brain region that mediate the sugar preference. This was accomplished by stimulating mice with sugar and then staining sections of the cNST region for the transcription factor c-fos (to label the neurons that became activated). The researchers found that the activated neurons are positive for expression of the specific neuronal marker protein proenkephalin. Hence, sugar activates specific neurons that express proenkephalin. The authors then expressed a synthetic “designer” receptor in these proenkephalin-positive neurons. The designer receptor responds to the drug clozapine, which binds to the receptor ligand binding domain on the cell surface. Binding of clozapine to this designer receptor then results in the activation of the neurons.

In the actual experiment, the researchers gave mice artificially sweetened cherry-flavoured and grape-flavoured solutions as drinking water. The grape solution was much less sweet, but contained clozapine. The researchers found that after 48 h the mice switched to the less sweet, but clozapine-containing, grape-flavoured solution. This suggests that clozapine, after ingestion and transport through the blood circulation, was able to activate the cNST neurons expressing the designer receptor and mediate a preference for the grape-flavoured solution. This confirms that activation of these specific cNST neurons is sufficient to create a sugar-like preference. In theory, mice expressing the synthetic receptor for clozapine could be made to eat anything, as long as clozapine was added to the food.

In the experiment, a plasmid encoding the clozapine designer receptor (called DREADD) was injected into the cNST area. The designer receptor was only expressed by glucose-sensing proenkephalin neurons, which was achieved using an approach similar to that described above for the tetanus toxin (i.e., expression of the DREADD receptor required a recombination event mediated by Cre recombinase, which in turn was expressed from the proenkephalin gene promoter). Once the clozapine receptor was expressed in proenkephalin-positive cells, the mice were tested for their preference between two flavours for 48 h. Shown is an example using a cherry-flavoured drink (containing 2 mM acesulfame K artificial sweetener) versus a grape-flavoured drink (with 1 mM acesulfame K). After initially conditioning the mice (Pre), clozapine was added to the less-preferred flavour. This resulted in a switch in preference to the less sweet grape solution, as shown in the diagram on the right. The preference for the less sweet grape solution was initially below 0.5, i.e. it was not preferred. After clozapine was added to the grape solution, the preference for it increased in all mice to above 0.5 (meaning that the mice licked the less sweet grape solution more often than the sweeter one).
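The preference values in the figure can be read as a two-bottle preference index. The sketch assumes the index is the fraction of all licks made at the test bottle, so that 0.5 means no preference; the lick counts are hypothetical.

```python
# Minimal sketch of a two-bottle preference index, assuming it is computed as
# the fraction of all licks directed at the test solution.
# All lick counts are hypothetical.


def preference_index(licks_test: int, licks_other: int) -> float:
    """0.5 = no preference; above 0.5 = test solution preferred."""
    total = licks_test + licks_other
    if total == 0:
        raise ValueError("no licks recorded")
    return licks_test / total


# Hypothetical counts for the grape (less sweet) bottle versus the cherry bottle.
pre = preference_index(licks_test=600, licks_other=1400)             # before clozapine
with_clozapine = preference_index(licks_test=1500, licks_other=500)  # clozapine in grape

for label, value in [("Pre", pre), ("Clozapine in grape", with_clozapine)]:
    verdict = "preferred" if value > 0.5 else "not preferred"
    print(f"{label}: {value:.2f} ({verdict})")
```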

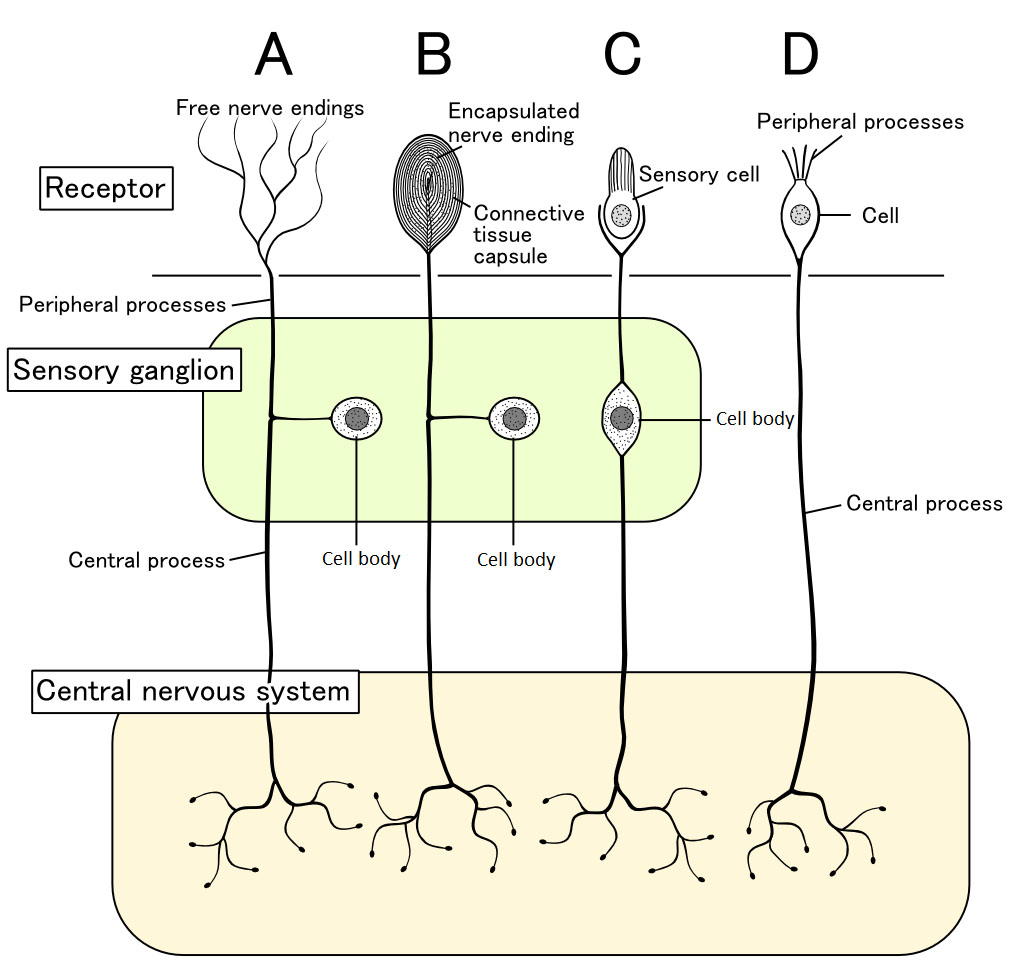

The study uncovered the entire neuronal pathway through which sugar preference is mediated. However, it did not really clarify how the gut senses the glucose consumed by the mice. There are different ways through which the gut can sense external stimuli. There could be peripheral sensory cells in the gut mucosa. Alternatively, the sensory cells could be located further away and extend sensory nerve endings to the site of stimulation to detect specific external signals (see the diagram taken from Wikipedia).

In the case of glucose sensing in the gut, it seems likely that there are sensory neurons in the mucosal wall of the intestine, which upon stimulation by glucose activate vagal nerve endings in the gut. As a first step to characterize the sensing mechanism, the authors showed that glucose sensing in the gut depends on the main glucose transporter in our gut, SGLT1. Interestingly, the glucose analogue 3-O-methylglucose, which can also be taken up via SGLT1 but is not further metabolized in cells, can also stimulate the gut-brain sugar axis. This suggests that glucose metabolism is not necessary for the sensing mechanism. Also of note, fructose is not a substrate for SGLT1 and, as expected, does not activate this sugar sensing pathway. This is likely one reason why the combination of glucose and fructose (in table sugar or high-fructose corn syrup) is particularly harmful: glucose mediates the craving, while fructose, which is taken up in the gut by other sugar transporters, mediates the adverse effects via its metabolism in the liver.

In conclusion, the study has described a novel mechanism through which glucose can be sensed in our gut. This may have important implications. For instance, the study identified a number of potential targets in the gut-brain sugar sensing axis to develop drugs to inhibit sugar craving. The authors also suggest that “it may be possible to develop a new class of sweeteners that activate both the sweet-taste receptor in the tongue and the gut–brain axis, and consequently help to moderate the strong drive to consume sugar.”

HIGHLIGHTS FOR WEEK OF 20 – 26 OCTOBER

De Novo Protein Design from Natural Language

Recently, a number of papers have described tools for de novo protein design based on large language models. These models take text or keyword descriptions of a protein's desired function and predict the amino acid sequences of novel proteins that fit this description.

One model with particularly impressive performance is Pinal, described in a recent preprint by Dai et al. (2025). Pinal designs novel proteins from natural language descriptions of the desired protein function.

As the authors point out, translating language input directly into sequence information is difficult. The model therefore takes a two-step approach. First, the natural language descriptions are translated into structural information. To achieve this, the authors use discrete structure tokens generated by the “vector quantization method”. This method “works by dividing a large set of points (vectors) into groups having approximately the same number of points closest to them”, thereby selecting “a set of points to represent a larger set of points”.
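To make the idea of discrete structure tokens more concrete, here is a minimal sketch of vector quantization in Python. It learns a small codebook with plain k-means (Lloyd's algorithm) and then replaces each continuous vector by the index of its nearest codebook entry, which plays the role of a token. This only illustrates the general technique under simplifying assumptions: the toy 2-D points stand in for structural features, the function names are hypothetical, and Pinal's actual structure tokenizer is not reproduced here. Note also that plain k-means does not force the groups to be of equal size.

```python
import random

def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest_code(vector, codebook):
    """Index of the codebook vector closest to `vector`."""
    return min(range(len(codebook)), key=lambda i: squared_distance(vector, codebook[i]))

def quantize(vectors, codebook):
    """Replace each continuous vector by a discrete token: the index of its nearest code."""
    return [nearest_code(v, codebook) for v in vectors]

def learn_codebook(vectors, k, iterations=20, seed=0):
    """Learn k representative code vectors with plain k-means (an illustrative choice)."""
    rng = random.Random(seed)
    codebook = [list(v) for v in rng.sample(vectors, k)]  # start from k data points
    for _ in range(iterations):
        tokens = quantize(vectors, codebook)
        for i in range(k):
            members = [v for v, t in zip(vectors, tokens) if t == i]
            if members:  # keep the previous code vector if no point was assigned to it
                codebook[i] = [sum(axis) / len(members) for axis in zip(*members)]
    return codebook

# Hypothetical 2-D "structural features" forming two well-separated groups.
points = [(0.1, 0.2), (0.0, 0.1), (0.2, 0.0), (5.0, 5.1), (5.2, 4.9), (4.9, 5.0)]
codebook = learn_codebook(points, k=2)
tokens = quantize(points, codebook)
print(tokens)  # the first three points share one token, the last three the other
```

The continuous points are thereby reduced to a short vocabulary of integer tokens, which is the kind of discrete representation a language model can work with.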

Subsequently, the model converts these structure tokens, together with the language description, into amino acid sequences. By using structure tokens as an intermediate representation, the authors substantially improved the performance of their protein design model.

To train their model, the researchers created a dataset named SwissProt-Aug, which consists of 4 million natural language–protein pairs. In SwissProt-Aug, functional descriptions of proteins from the UniProt database are paired with their sequences.

However, this dataset was too small to train the model. The authors also point out that training the model solely on databases of well-characterised proteins (UniProt and the PDB) yields designs with good foldability and good alignment with the natural language instructions, but the generated protein sequences are strongly similar to known proteins. In other words, the newly designed proteins display a low degree of novelty.

By contrast, the authors note that “biologists are more interested in finding proteins that are structurally distinct from known proteins”. The authors therefore made use of “synthesized data”, i.e. proteins whose structures were predicted by the AlphaFold neural network.

Specifically, the authors used the UniRef50 database, which contains two orders of magnitude more proteins than the PDB. The proteins in UniRef50, however, lack highly accurate annotations. To functionally annotate them, the researchers used a recently developed model named ProTrek.

ProTrek is a tri-modal protein language model that can derive textual information from both sequence and structural perspectives.

According to the Google AI summary, “ProTrek is a computer model that understands proteins by looking at three things: their amino acid sequence, structure, and function. It’s like a translator that can search for proteins by linking any of these three aspects together, allowing researchers to quickly find related proteins even if their shapes are different, but they perform a similar function.”

ProTrek can be queried via a web-based prompt, and according to Google AI, the model can answer a variety of search questions, such as:

“Find proteins with this function, even if their 3D shapes are different.” “Find proteins with a similar structure to this one, and see what their functions are.” “Find the function of this protein, given its sequence.”

By using the last functionality, the researchers who developed Pinal were able to functionally annotate the proteins from the UniRef50 database. This resulted in a total of 400 million natural language-protein pairs that could be used for training Pinal.
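The annotation step can be pictured as a retrieval problem. Assuming, as is common for multimodal models of this kind, that ProTrek maps proteins and text descriptions into a shared embedding space, a protein can be annotated with the candidate description whose embedding is most similar to it. The sketch below uses cosine similarity on made-up three-dimensional vectors; the embeddings, descriptions and function names are hypothetical and do not reflect ProTrek's real interface.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms

def annotate(protein_embedding, description_embeddings):
    """Return the description most similar to the protein embedding, plus its score."""
    best = max(description_embeddings,
               key=lambda d: cosine_similarity(protein_embedding, description_embeddings[d]))
    return best, cosine_similarity(protein_embedding, description_embeddings[best])

# Hypothetical 3-D embeddings; a real model would produce much longer vectors.
descriptions = {
    "alcohol dehydrogenase": [0.9, 0.1, 0.0],
    "fluorescent protein": [0.0, 0.2, 0.95],
    "glucose transporter": [0.1, 0.9, 0.1],
}
unannotated_protein = [0.8, 0.2, 0.1]
label, score = annotate(unannotated_protein, descriptions)
print(label, round(score, 2))  # alcohol dehydrogenase 0.98
```

Repeating this lookup for every unannotated protein is what turns a large collection of sequences into text-protein training pairs.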

After training their model, the authors evaluated the foldability, language alignment and novelty of the designed proteins. Foldability was assessed with ESMFold, a protein language model that, like AlphaFold, predicts 3D protein structures from amino acid sequence input. To evaluate language alignment, the researchers again used the ProTrek model. Finally, to evaluate the novelty of the designed proteins, the researchers compared their structures with known structures in the PDB.

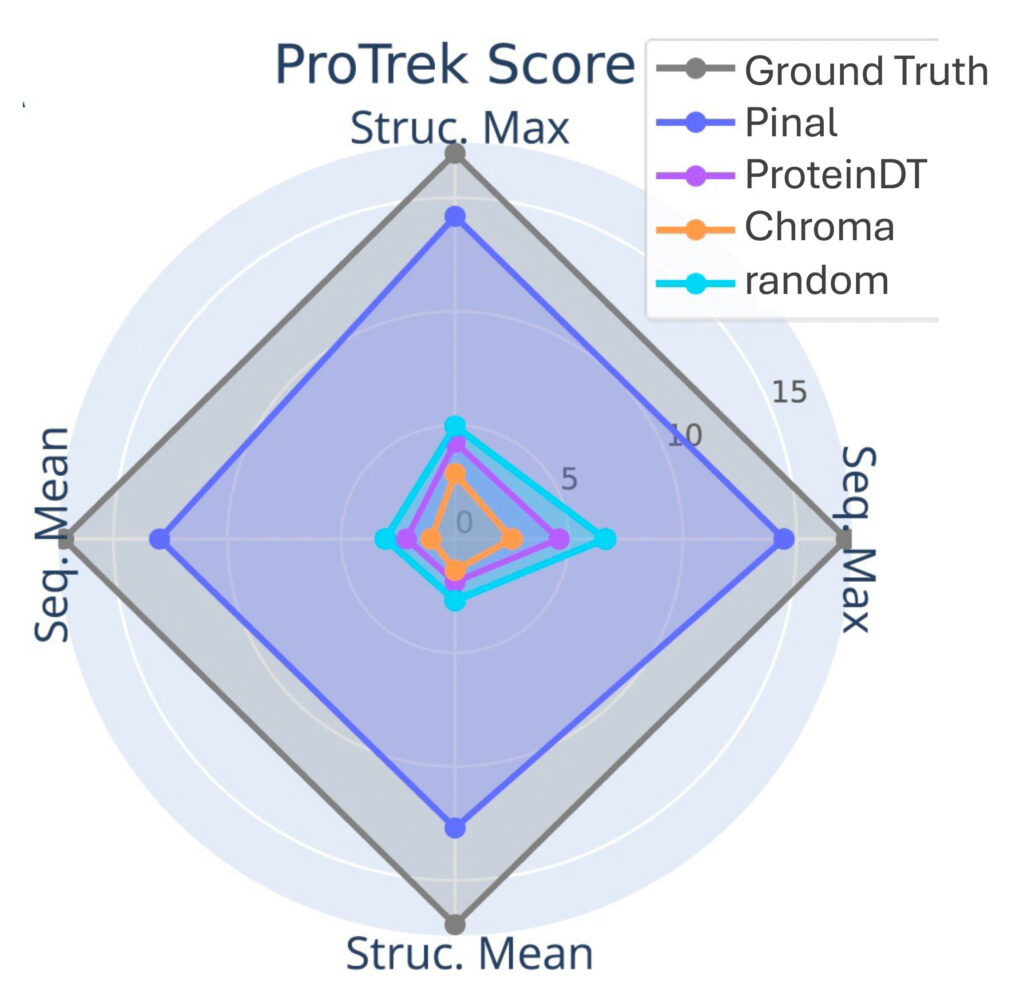

The authors then compared Pinal with other existing protein design tools, using a test set of proteins with known function, sequence and structure. They compared it with ProteinDT and Chroma, which, like Pinal, accept long sentence instructions. For each test protein, they used an instruction summarising its known function and asked each model to design five proteins based on that instruction.

To evaluate how well the sequence and structure of the designed proteins match their description, the researchers used ProTrek (which, as mentioned above, can derive textual information from sequence and structural input). For both sequence and structure, the authors reported the maximum and mean scores among the five proteins generated from each language instruction.

Notably, the researchers found that the ProTrek scores (i.e. the alignment of the language instruction with the sequence and structure of the designed proteins) of proteins designed by ProteinDT and Chroma showed no significant difference from those of arbitrarily selected proteins from Swiss-Prot (see figure). In other words, the proteins designed by these tools matched their text descriptions no better than randomly chosen proteins did. In contrast, Pinal produced ProTrek scores almost as high as those of the known pairings of description with sequence and structure in the test set (the ground-truth scores).
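The phrase “no significant difference” refers to a statistical comparison between two groups of scores. The text does not say which test the authors used, so the sketch below is only a generic example: a two-sided permutation test on the difference in mean scores between designed proteins and randomly selected proteins. The function name and all score values are hypothetical.

```python
import random
from statistics import mean

def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in mean score between two groups."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # randomly reassign the scores to the two groups
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    # The +1 terms count the observed labelling itself and keep p above zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)

# Hypothetical alignment scores for designed proteins and random Swiss-Prot proteins.
designed = [4.1, 3.8, 5.0, 4.4, 3.9]
random_baseline = [4.0, 4.3, 3.7, 4.6, 4.2]
print(permutation_p_value(designed, random_baseline))
```

With heavily overlapping scores like these, the p-value comes out large, meaning the data give no evidence that the designed proteins align with their descriptions better than random proteins do.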

The authors also evaluated the foldability of the designed proteins. They found that proteins designed by Pinal exhibit foldability scores comparable to those of well-characterised proteins from the Swiss-Prot database, indicating that Pinal designs structurally plausible proteins. In contrast, sequences from Chroma and ProteinDT often failed to fold into 3D structures.

Comparison of Pinal and ESM3

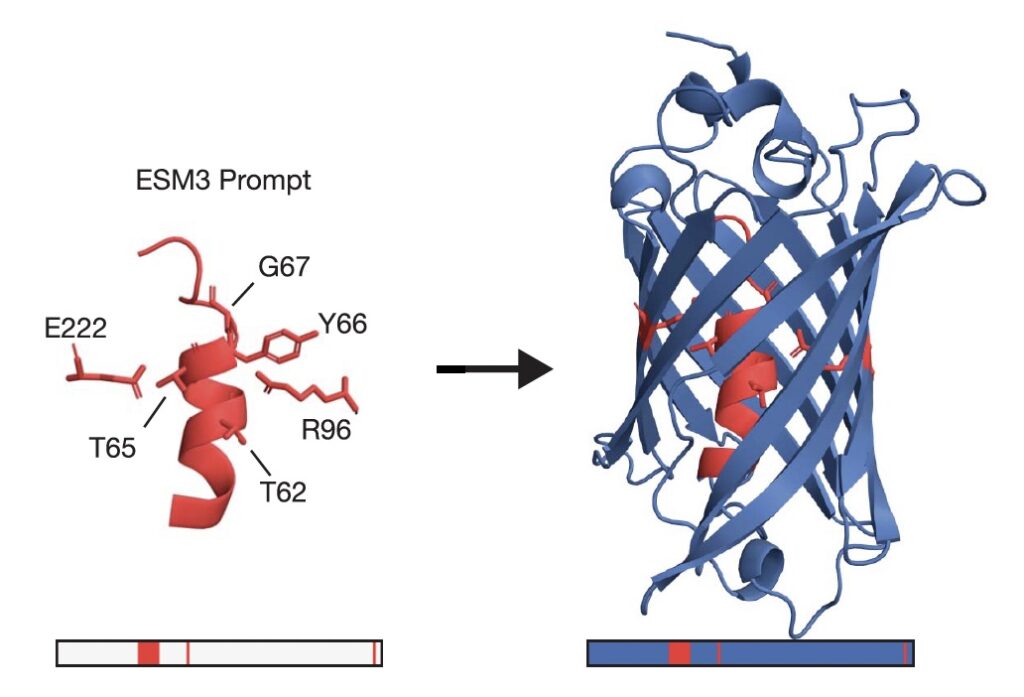

ESM3 is another recently published protein design tool based on a large language model. In ESM3, the three protein modalities (sequence, structure and function, the latter in the form of keywords) can be used interchangeably as input or desired output. In the application examples in the original paper, the authors used function keywords, sequence information (e.g. the desired length of the designed protein) and structural coordinates as input.

For instance, to design novel green fluorescent proteins, the authors used three prompts: the function keyword “fluorescence”, a protein length of 229 amino acids as the sequence prompt, and, as the structural prompt, the identity, position and structural arrangement of the six amino acids critical for forming the chromophore in the native Green Fluorescent Protein (GFP) from jellyfish, as shown below.

Protein design prompt (left) used to generate the synthetic GFP candidate on the right of the image.

Native jellyfish GFP consists of a beta barrel that forms a cage in which the chromophore forms. ESM3 designed a protein with a similar overall structure and with spectroscopic properties similar to those of known natural fluorescent proteins.

The designed protein sequence was 58% identical to the closest natural fluorescent protein. Based on this degree of sequence divergence, the authors estimated that it “represents an equivalent of >500 million years of evolution from the closest protein that has been found in nature”.
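Sequence identity of this kind is usually computed from a pairwise alignment. The sketch below assumes the two sequences have already been aligned (with “-” marking gaps) and divides the number of identical, non-gap positions by the alignment length. Definitions of percent identity differ between tools, for example in how gaps are counted, so this is one common convention rather than necessarily the one used in the ESM3 paper. The example fragments are hypothetical.

```python
def percent_identity(aligned_a, aligned_b, gap="-"):
    """Percentage of alignment columns in which both sequences carry the same residue."""
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have the same length")
    if not aligned_a:
        raise ValueError("alignment is empty")
    identical = sum(1 for a, b in zip(aligned_a, aligned_b) if a == b and a != gap)
    return 100.0 * identical / len(aligned_a)

# Two short hypothetical aligned fragments that differ at two of ten positions.
print(percent_identity("MSKGEELFTG", "MSKGEKLFTA"))  # 80.0
```

A value of 58% therefore means that 42% of the aligned positions carry a different amino acid from the closest natural protein.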

To compare Pinal with ESM3 fairly, the developers of Pinal used keyword prompts, because ESM3 recognises function keywords but cannot interpret natural language sentences.

The researchers found that Pinal achieves a higher ProTrek score, indicating better alignment of the predicted sequence and structure of the designed proteins with the language instruction. Furthermore, while half of the proteins from Pinal exhibit predictable functions, only around 10% of the proteins generated by ESM3 do so. Finally, the proteins designed by Pinal also exhibit better foldability.

The researchers also carried out laboratory experiments to validate Pinal. They gave Pinal the prompt “Please design a protein that is an alcohol dehydrogenase”, and then evaluated whether the protein designs predicted by Pinal were functional. Impressively, five of the eight selected alcohol dehydrogenase sequences could be successfully expressed and purified, and exhibited the ability to oxidise ethanol to produce NADH from NAD+ in an enzyme activity assay.
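The text does not describe the assay in detail. A common way to follow such a reaction, assumed here, is to record the rise in absorbance at 340 nm as NAD+ is reduced to NADH, and to convert that slope into an enzyme activity with the Beer-Lambert law, using the molar absorptivity of NADH at 340 nm (6220 M⁻¹ cm⁻¹). The function names and example values below are hypothetical.

```python
NADH_EPSILON_340 = 6220.0  # molar absorptivity of NADH at 340 nm, in M^-1 cm^-1

def nadh_formation_umol_per_min(delta_a340_per_min, volume_ml, path_cm=1.0):
    """Convert the slope of A340 versus time into µmol of NADH formed per minute."""
    # Beer-Lambert: A = epsilon * c * l, so dc/dt = (dA/dt) / (epsilon * l), in mol/L per min.
    concentration_rate = delta_a340_per_min / (NADH_EPSILON_340 * path_cm)
    # Multiply by the reaction volume in litres, then convert mol to µmol.
    return concentration_rate * (volume_ml / 1000.0) * 1e6

def specific_activity_u_per_mg(delta_a340_per_min, volume_ml, enzyme_mg, path_cm=1.0):
    """Specific activity in units per mg, where 1 U = 1 µmol NADH formed per minute."""
    return nadh_formation_umol_per_min(delta_a340_per_min, volume_ml, path_cm) / enzyme_mg

# Hypothetical reading: A340 rises by 0.0622 per minute in a 1 mL assay with 10 µg enzyme.
print(specific_activity_u_per_mg(0.0622, volume_ml=1.0, enzyme_mg=0.010))
```

These example values give a specific activity of about 1 U/mg; an inactive design would show no rise in A340 beyond a no-enzyme control.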

Why have I been so keen to learn more about these protein design tools? The main reason is that these models might be an excellent teaching tool that lets students learn by doing original and potentially exciting research. Similar to the validation experiments described above, students could design novel proteins, evaluate these designs computationally, and eventually produce the proteins and test their activity in laboratory experiments.

What makes this type of lab practical exciting for students is, first, that they can decide for themselves what kind of novel proteins they want to design. Second, the outcome of the experiments is unknown, just as in real research.

Moreover, this kind of activity is useful because students get to solve real problems along the way. For these reasons, I am currently trying to find a way to run these activities in a postgraduate course next year.

HIGHLIGHTS FOR WEEK OF 13 – 19 OCTOBER

I recently realised that five years ago I wrote two research updates on sugar metabolism that I never published. So that they do not go to waste entirely, I decided to publish them in my weekly highlights. Here is the first.

5 July 2020

New Research: How bad is fructose for us?


Recently, I came across a number of interesting papers on fructose. We commonly consume fructose in table sugar, in high-fructose corn syrup and in fruit. Table sugar, or sucrose, is a disaccharide consisting of equal parts glucose and fructose. High-fructose corn syrup, which is added to Coke and other soft drinks, contains 55% fructose and 45% glucose.

First, some not-so-fun facts about fructose, and sugar in general, from a review on fructose transporters by Veronique Douard and Ronaldo Ferraris. Centuries ago, sugar was much more expensive, and so in 1700, Europeans consumed only about 5 grams of sugar per day. By 1950, consumption had risen to 120 grams per day, and today it is 180 grams per day on average. Given that 1 gram of sugar provides 3.9 calories (kcal), an average person consumes about 700 calories from sugar alone. That is about a third of the recommended daily calorie intake (2000 calories for women and 2500 for men), so one can imagine how many calories we could save simply by no longer eating and drinking sugar. One can of Coke alone contains 39 grams of sugar, of which about half is fructose.
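The calorie figures above can be checked with a few lines of Python, using the 3.9 kcal per gram and the intake values quoted in the text.

```python
KCAL_PER_GRAM_SUGAR = 3.9  # energy content of sugar used in the text

def sugar_kcal(grams):
    """Energy (kcal) provided by a given mass of sugar."""
    return grams * KCAL_PER_GRAM_SUGAR

daily_kcal = sugar_kcal(180)       # today's average daily sugar intake
share_women = daily_kcal / 2000    # fraction of a 2000 kcal diet
share_men = daily_kcal / 2500      # fraction of a 2500 kcal diet
increase_since_1700 = 180 / 5      # fold increase from 5 g/day in 1700
coke_kcal = sugar_kcal(39)         # one can of Coke
coke_fructose_g = 39 / 2           # roughly half of the sugar is fructose

print(f"{daily_kcal:.0f} kcal/day, {share_women:.0%} / {share_men:.0%} of daily intake")
print(f"{increase_since_1700:.0f}-fold increase since 1700; "
      f"one can: {coke_kcal:.0f} kcal, {coke_fructose_g} g fructose")
```

This gives about 702 kcal per day, which is 35% of a 2000 kcal diet and 28% of a 2500 kcal diet, a 36-fold increase since 1700, and roughly 152 kcal and 19.5 g of fructose per can.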

There are some important differences between glucose and fructose. While glucose can be taken up and used by all cells in our body, fructose is metabolized mainly in the liver, because the liver has the transporters and enzymes needed to utilize it. (The intestine also expresses fructose transporter proteins, which absorb fructose from food so that it can be transported to the liver.) If we take in a lot of fructose with a meal or a sugary drink, the liver, as the main fructose-metabolizing organ, can easily be overwhelmed. The available fructose then exceeds the energy needs of the liver cells, so they are unable to use all of it. The excess fructose is converted into lipids, or fat, which accumulate in the liver (as fatty liver) and other organs.

Another difference is that glucose metabolism is highly regulated, so cells take up glucose only when they need it, for instance to produce energy. Fructose metabolism, on the other hand, is not subject to such regulatory mechanisms. Hence, liver cells continue to take up fructose beyond their needs, leading to more lipid accumulation.

From an evolutionary perspective, when our ancestors consumed lots of fruit, converting fructose into fat was beneficial, because the stored fat helped humans survive periods of food shortage. However, this survival mechanism has become a metabolic liability in modern times, now that we consume vastly greater amounts of fructose.

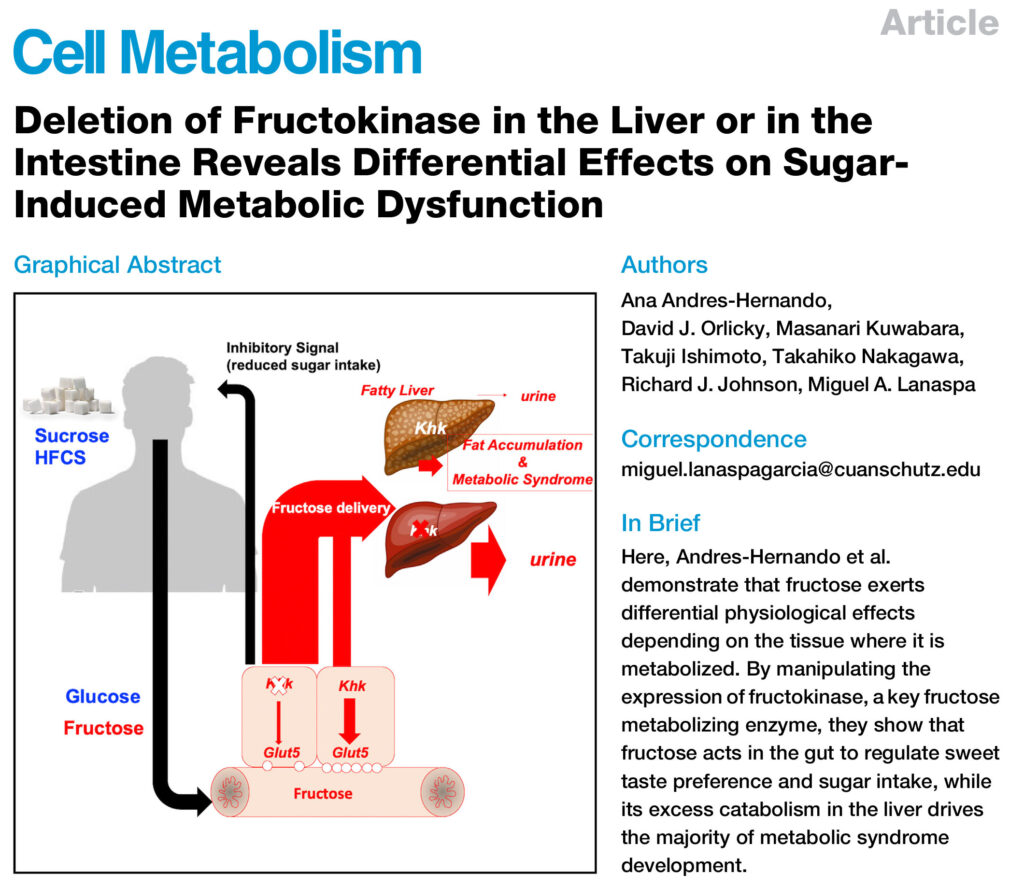

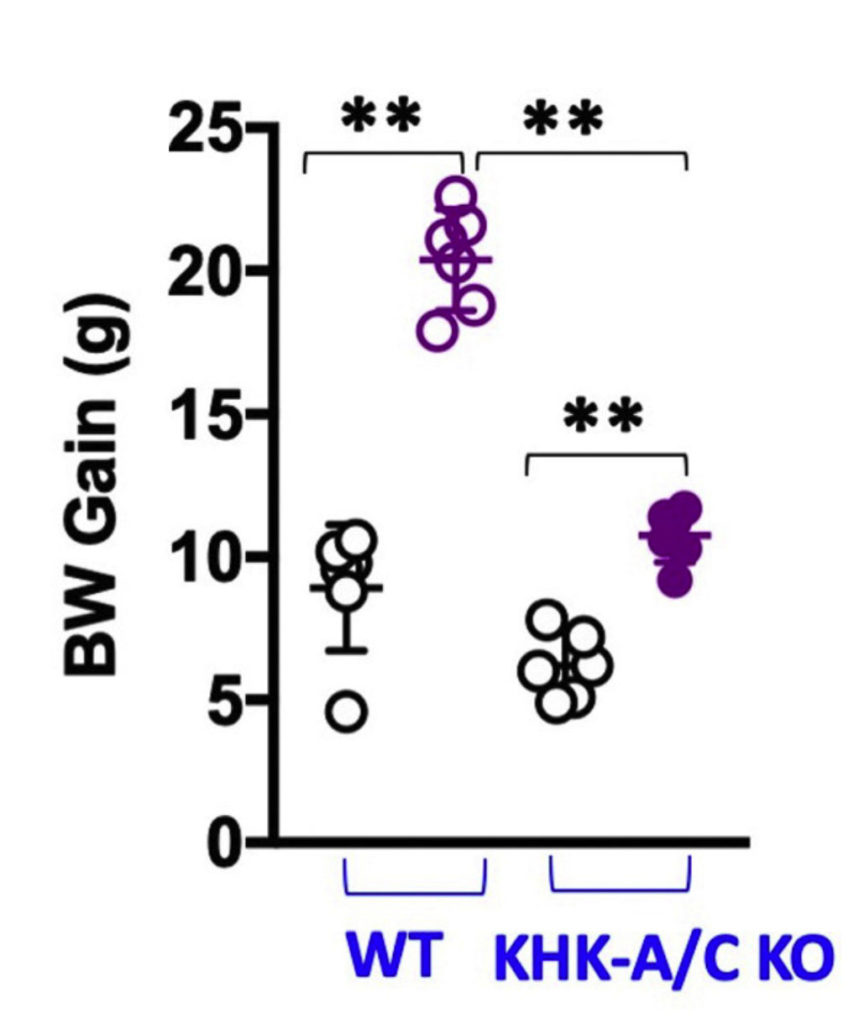

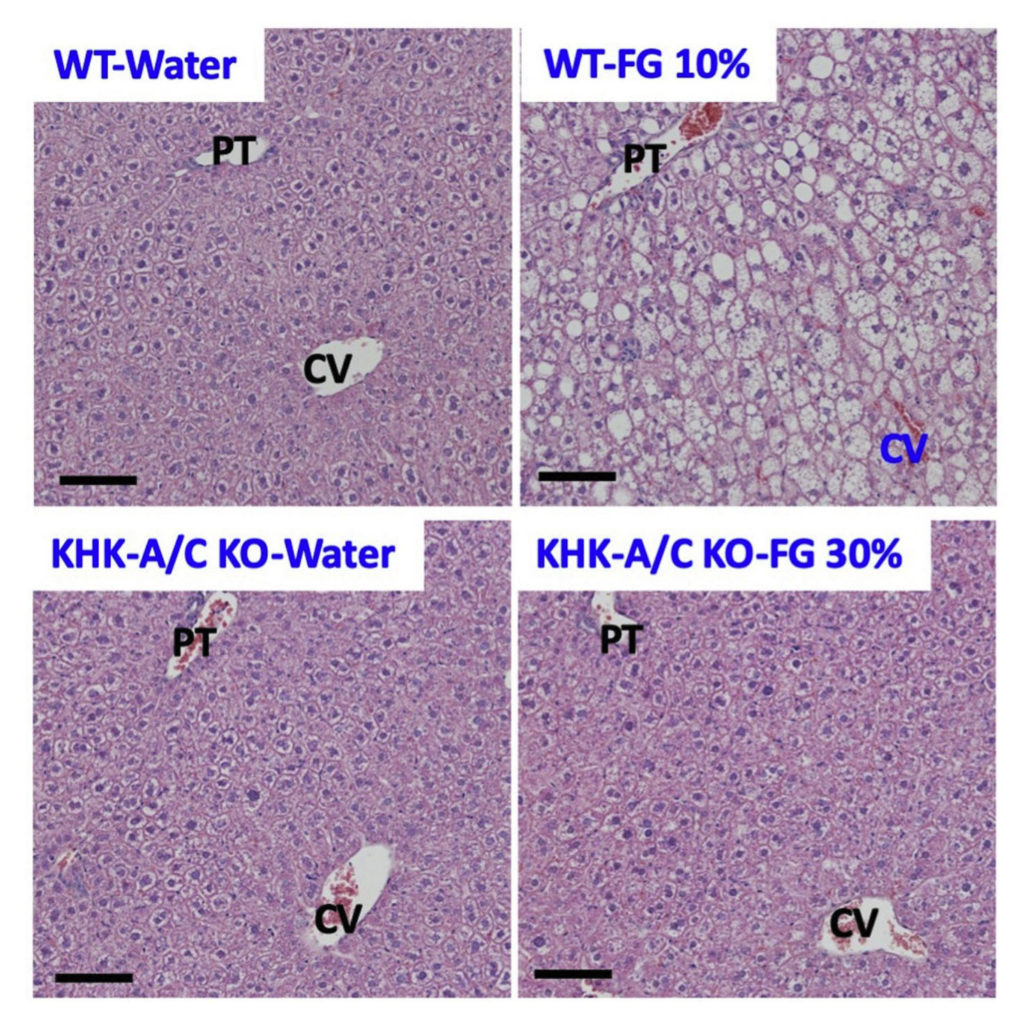

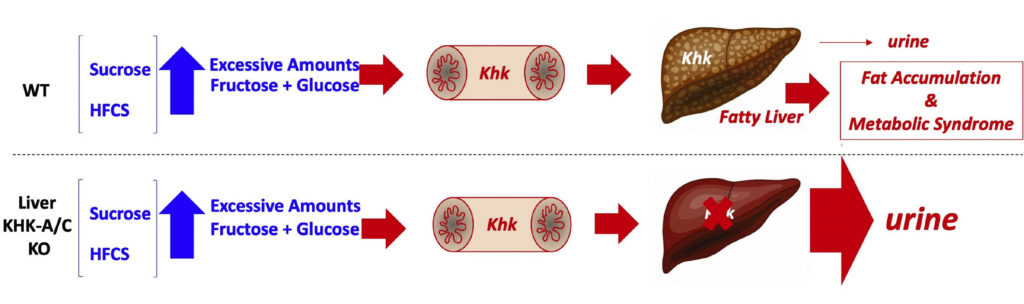

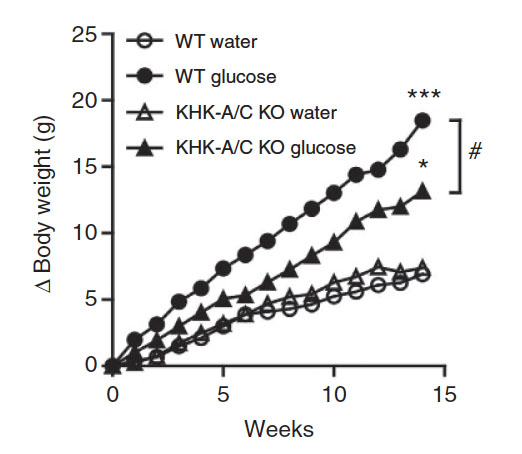

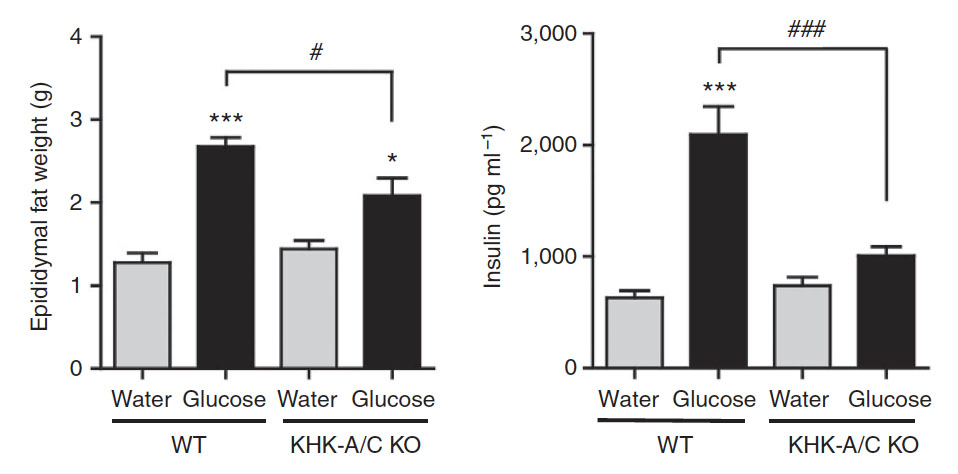

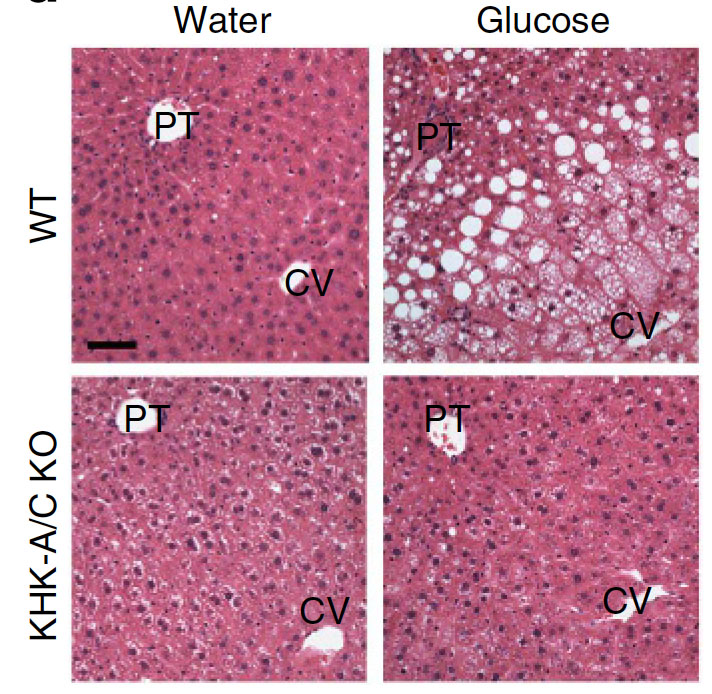

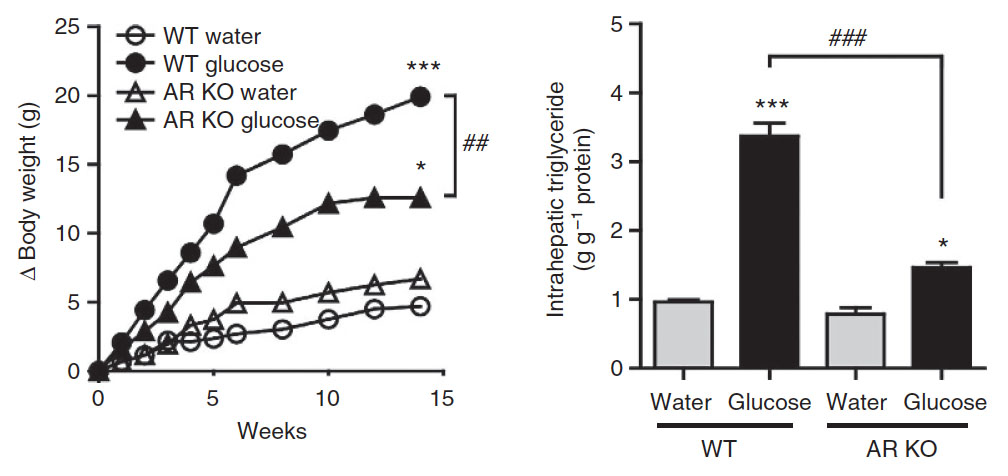

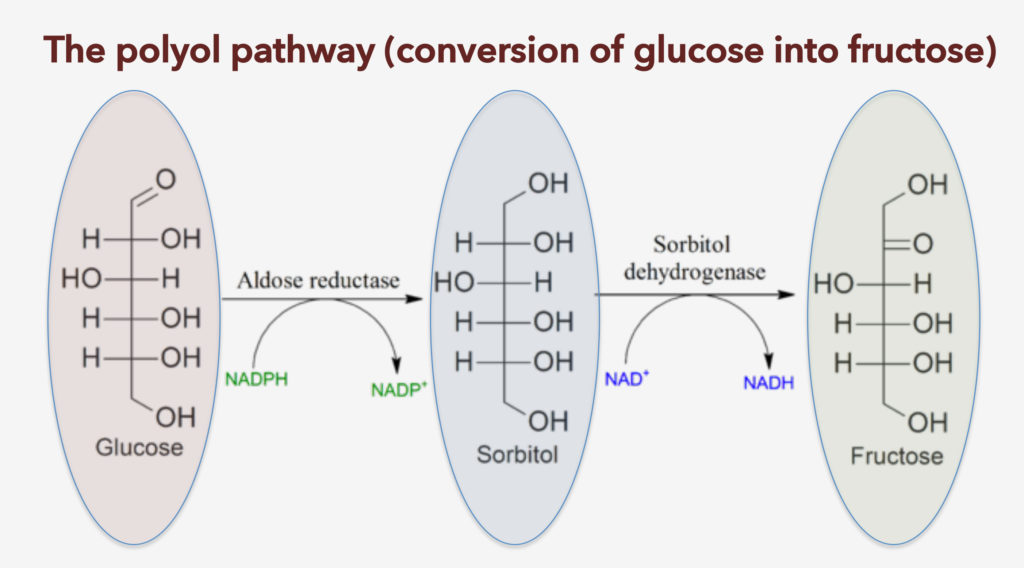

In one of these papers, published by Andres-Hernando et al. in Cell Metabolism in July this year, the authors examined the role of fructose in sugar-induced metabolic syndrome. Metabolic syndrome comprises a set of symptoms (central obesity, elevated fasting blood glucose, high blood pressure, elevated blood triglycerides and low HDL cholesterol), and sugar worsens all of them. The paper has a number of interesting findings:

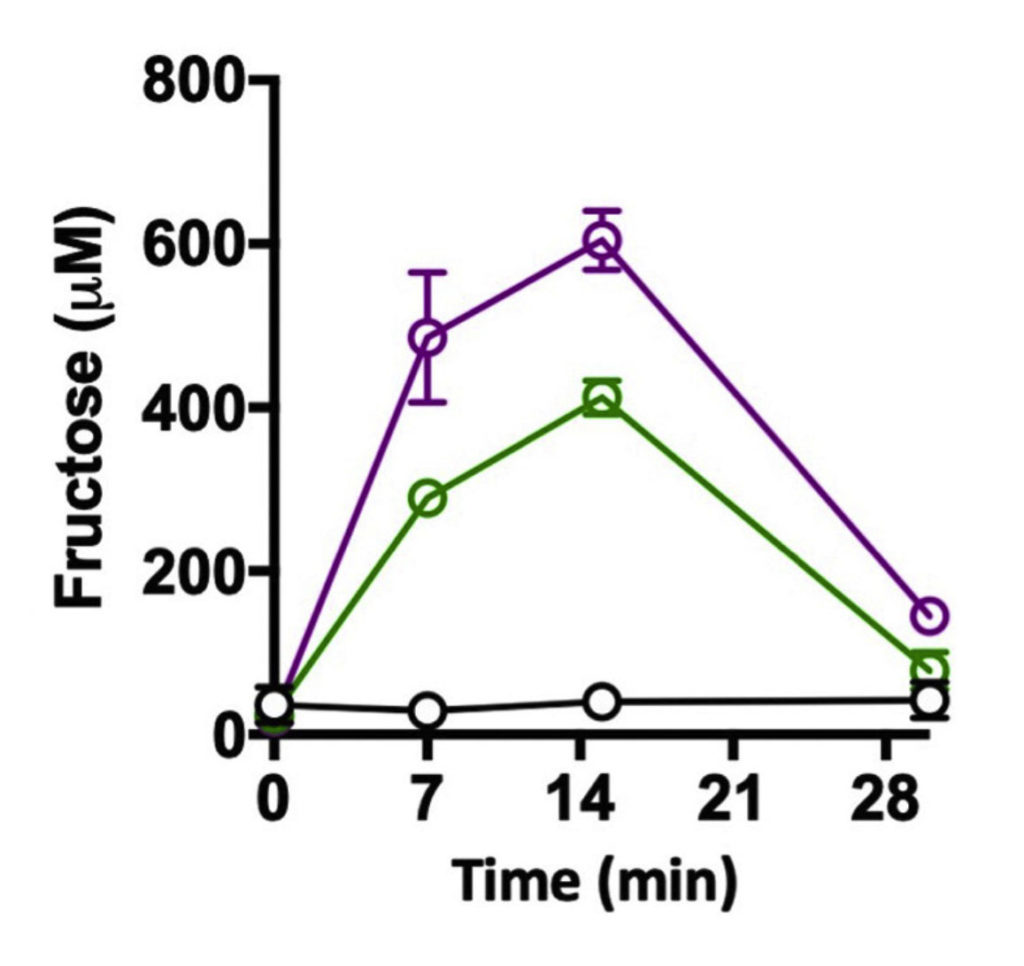

Firstly, the authors found that glucose stimulates fructose uptake (see figure). This means that the combination of glucose and fructose is particularly harmful. (I initially thought this might explain why fruits, which contain lots of fructose, are generally considered healthy, on the assumption that they contain fructose without glucose. However, I realized that fruits contain both fructose and glucose, at a ratio of about 2:1. Hence, there must be other reasons why fruits are good for us despite being rich in sugar, which probably have to do with their high fiber content.)

Fructose levels in portal vein blood of mice after giving the mice water (black), fructose alone (green), or the same amount of fructose together with an equal amount of glucose (purple).